Using Claude Code on your phone

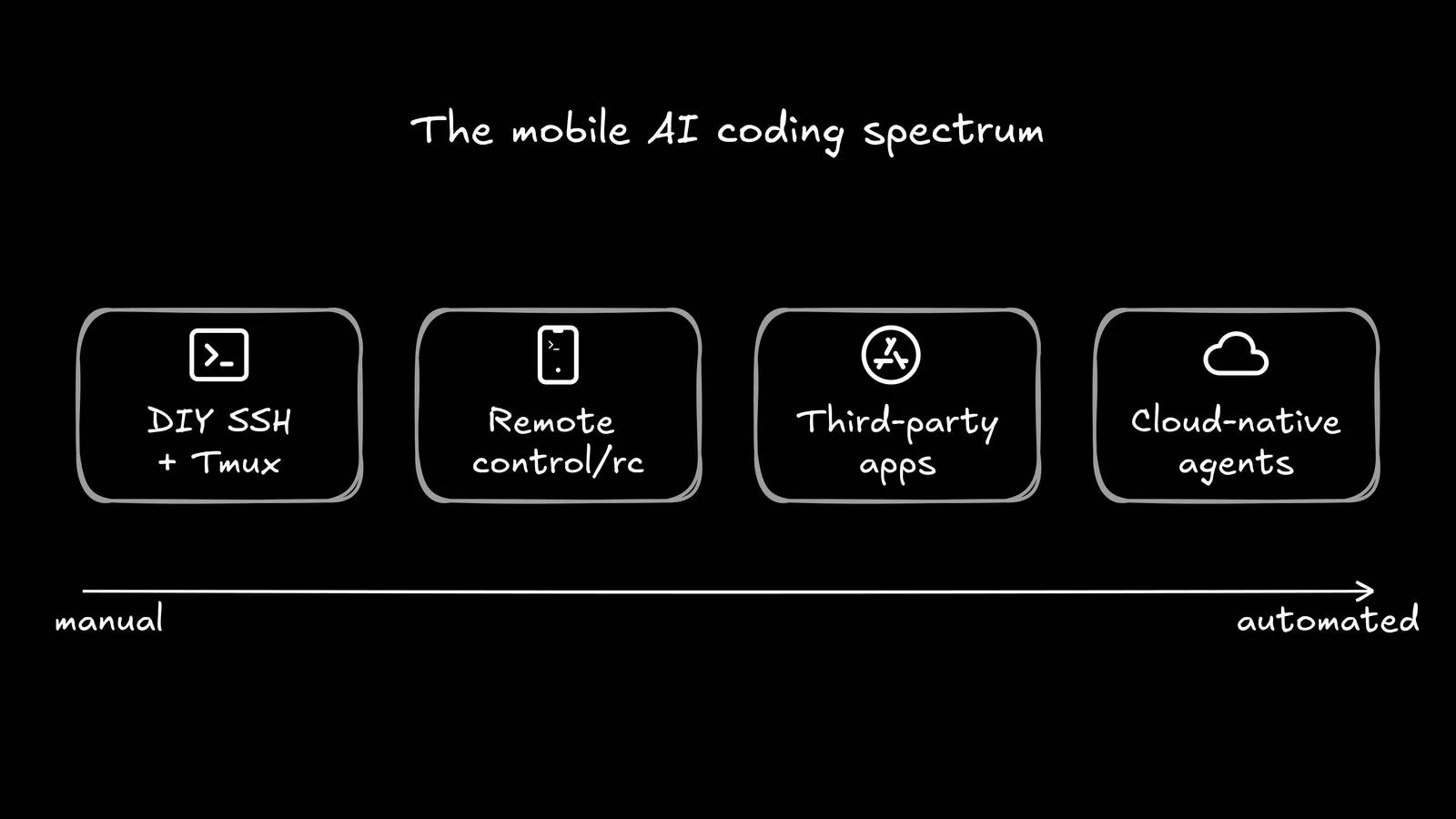

The dream of programming from a phone has been a running theme online for years. "I'll just SSH in from the beach" is something every programmer has said at least once, right before angrily closing Termux and storming off to the beach.

But AI agents have brought this dream closer to reality than ever before. Claude Code's growth has been remarkable, with 29 million VS Code extension installations per day and annual recurring revenue (ARR) above $2.5 billion, and developers are building more applications from the terminal than ever. Naturally, they want that power everywhere: on the sofa, on the commute, even at the beach.

The internet responded with a host of alternatives: SSH tunnels, third-party companion apps, one-click cloud deployments, and Anthropic's official Remote Control feature. There's no shortage of ways to use Claude Code on your phone.

This article walks through the main approaches, weighs their pros and cons, and then asks the hard question: is a command-line interface on a 6-inch screen really the right fit for AI agents?

DIY method: SSH + Tailscale + tmux

First, the traditional approach to programming on a phone. The architecture looks like this:

- Keep a desktop running Claude Code at home.

- Use Tailscale to create a private network between your desktop and your phone.

- Install Termux (Android) or Blink (iOS) to get a real command line.

- Connect to your desktop via SSH.

- Use tmux (or zellij) to keep your session alive when your phone locks.

In short:

```shell
# On the desktop
npm install -g @anthropic-ai/claude-code
sudo apt install tmux
curl -fsSL <tailscale-install-script-url> | sh   # install Tailscale
sudo tailscale up

# On the phone (Termux)
pkg update && pkg install openssh
ssh your-username@100.64.0.5
tmux new -s code
claude
```

If everything goes smoothly, setup takes about 20 minutes, after which you can run a full Claude Code session from your phone. You get full Unix power, no third-party dependencies beyond Tailscale, and it works with any CLI tools you already use.

But this is where things get tricky. An SSH connection drops as soon as your phone goes to sleep or switches from Wi-Fi to cellular. Mosh can keep the connection alive through those changes, but it adds another layer of configuration. And let's be honest about the user experience: you're debugging code on a very small screen.

If managing an agent from a laptop makes you feel like a real system administrator, then managing it from your phone via SSH will be a real headache.
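To soften the disconnect problem, the phone side can at least reconnect with one command. The sketch below is a minimal example under stated assumptions: it assumes mosh is installed on both the phone (`pkg install mosh` in Termux) and the desktop (`sudo apt install mosh`), and the `connect` function and the `REMOTE_HOST`, `SESSION_NAME`, and `DRY_RUN` variables are hypothetical names, not part of any tool. It reuses the placeholder Tailscale address and the `code` session name from the setup commands.

```shell
#!/bin/sh
# Hypothetical helper for the phone side (e.g. Termux): reattach to the desktop's
# tmux session, preferring mosh when installed and falling back to plain ssh.
# REMOTE_HOST and SESSION_NAME are assumptions; set them to your own values.
REMOTE_HOST="${REMOTE_HOST:-your-username@100.64.0.5}"
SESSION_NAME="${SESSION_NAME:-code}"

connect() {
  # "new-session -A" attaches to the session if it already exists and creates it
  # otherwise, so the same command works for the first connection and every reconnect.
  remote_cmd="tmux new-session -A -s $SESSION_NAME"
  if command -v mosh >/dev/null 2>&1; then
    # mosh tolerates sleep and Wi-Fi/cellular switches; the remote command follows "--".
    # $remote_cmd is deliberately unquoted so it splits into separate arguments.
    set -- mosh "$REMOTE_HOST" -- $remote_cmd
  else
    # -t forces a TTY so tmux can draw its interface over ssh.
    set -- ssh -t "$REMOTE_HOST" "$remote_cmd"
  fi
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"   # print the command instead of running it
  else
    "$@"
  fi
}
```

Source the file (for example `. ./connect.sh`) and run `connect`; `DRY_RUN=1 connect` only prints the command it would run. Using `new-session -A` rather than `tmux new -s code` means a reconnect does not fail just because the session already exists.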

Claude Code Remote Control: The Official Solution

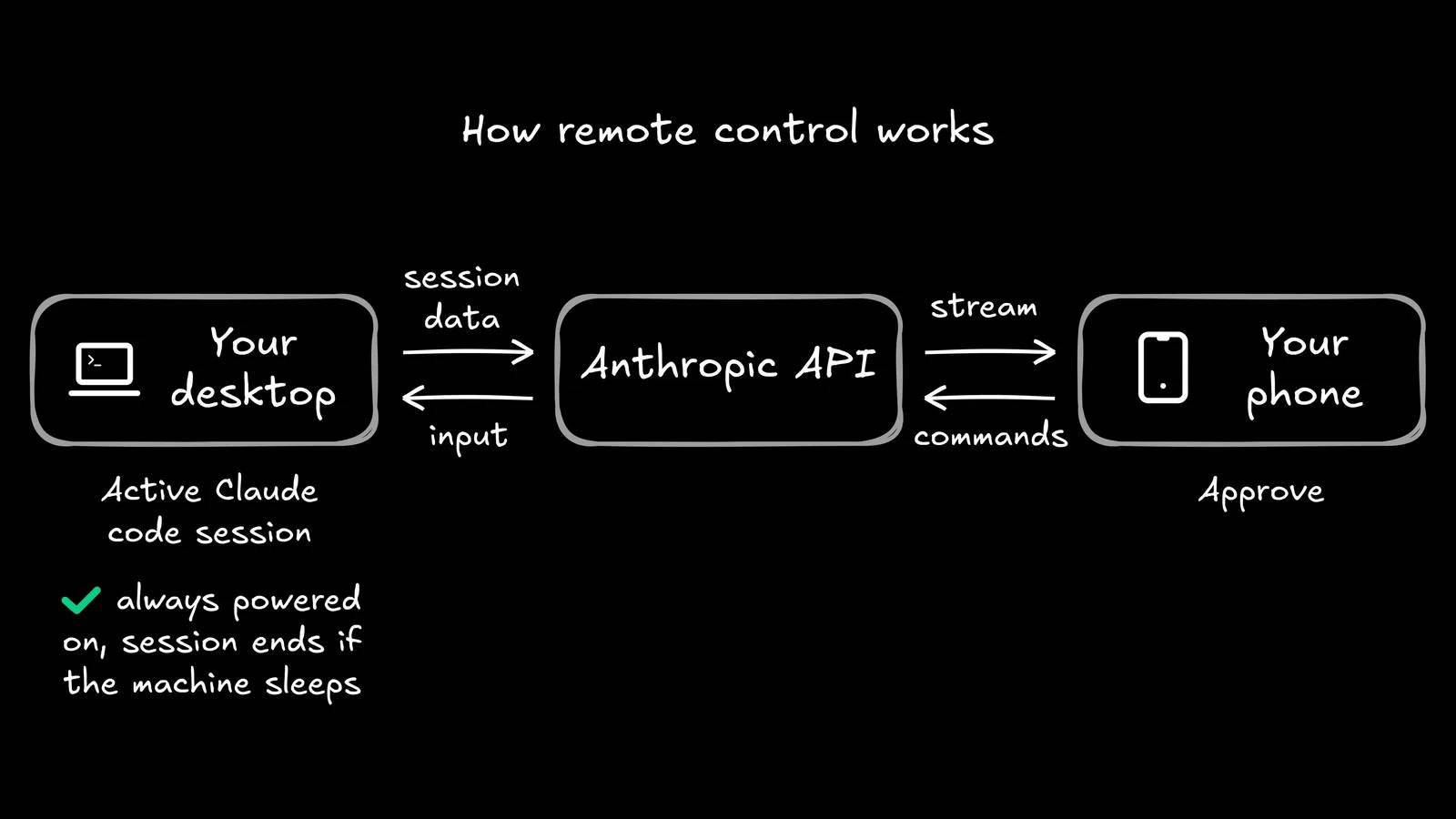

On February 25, 2026, Anthropic released its Remote Control feature. It's neat, official, and takes only about 5 seconds to set up.

From any active Claude Code session, run `/rc` (or `claude remote-control`) and you'll get a QR code. Scan it with the Claude app on your phone and you have full control of the session: the same files, the same MCP servers, the same project context. You can walk away from your desk, and your agent keeps running.

The security model is solid: no inbound ports are opened on your machine, all traffic is routed through Anthropic's API over TLS with short-lived credentials, and sessions reconnect automatically after network interruptions of up to about 10 minutes. It's a neat solution to a real problem.

For many developers, Remote Control is ideal. You start a refactoring task before dinner, scan the QR code, and monitor it from the sofa. The "spontaneous idea → coded while drinking coffee" flow has become a reality, at least in theory.

But there are a few things worth noting:

- Your desktop must stay powered on with the terminal window open, so your laptop's battery life is now part of your infrastructure.

- You can only run one Remote Control session at a time.

- You cannot start sessions from a mobile device; you can only continue sessions you have already started.

- This feature is currently in Research Preview and is only available for the Max plan ($100-$200/month), with Pro access coming soon and no timeline yet for Team or Enterprise plans.

The developer feedback has been enthusiastic but revealing. Everyone enjoys monitoring long-running tasks from the sofa. But the most common request on X wasn't "improve Remote Control"; it was "let me start sessions from my phone." As one developer put it: "I don't think you understand how many startups will die when Anthropic fixes the Claude Code tab bug in the mobile app." Remote Control is a great remote-monitoring tool. But it's not a mobile-first workflow.

Third-party applications and cloud deployments

Beyond the DIY and official methods, an entire ecosystem has flourished:

- Mobile IDE for Claude Code (iOS): A companion app that syncs prompts and results between your iPhone and Mac via CloudKit. One free prompt per day, or pay for unlimited use.

- Railway's claude-code-ssh template: One-click cloud deployment that gives you an always-on container you can SSH into from anywhere. Your laptop can finally sleep.

- Vibe Companion: Stan Girard discovered a hidden `--sdk-url` flag in the Claude Code binary and built an open-source web UI around it. Mobile programming from your browser, no app needed.

- Takopi: Routes Claude Code through Telegram, turning your messaging app into a programming interface.

These are all innovative solutions. But they all share the assumption that the local terminal is the appropriate interface for this type of work. And when the developer's role shifts from writing code to coordinating code-writing agents, that assumption deserves to be questioned.

The real question is: Is a command-line interface the right choice?

Let's take a broader view. We've just listed six different ways to bring the command line to phones. That's a lot of engineering effort aimed at a single user experience: a command line on a small screen.

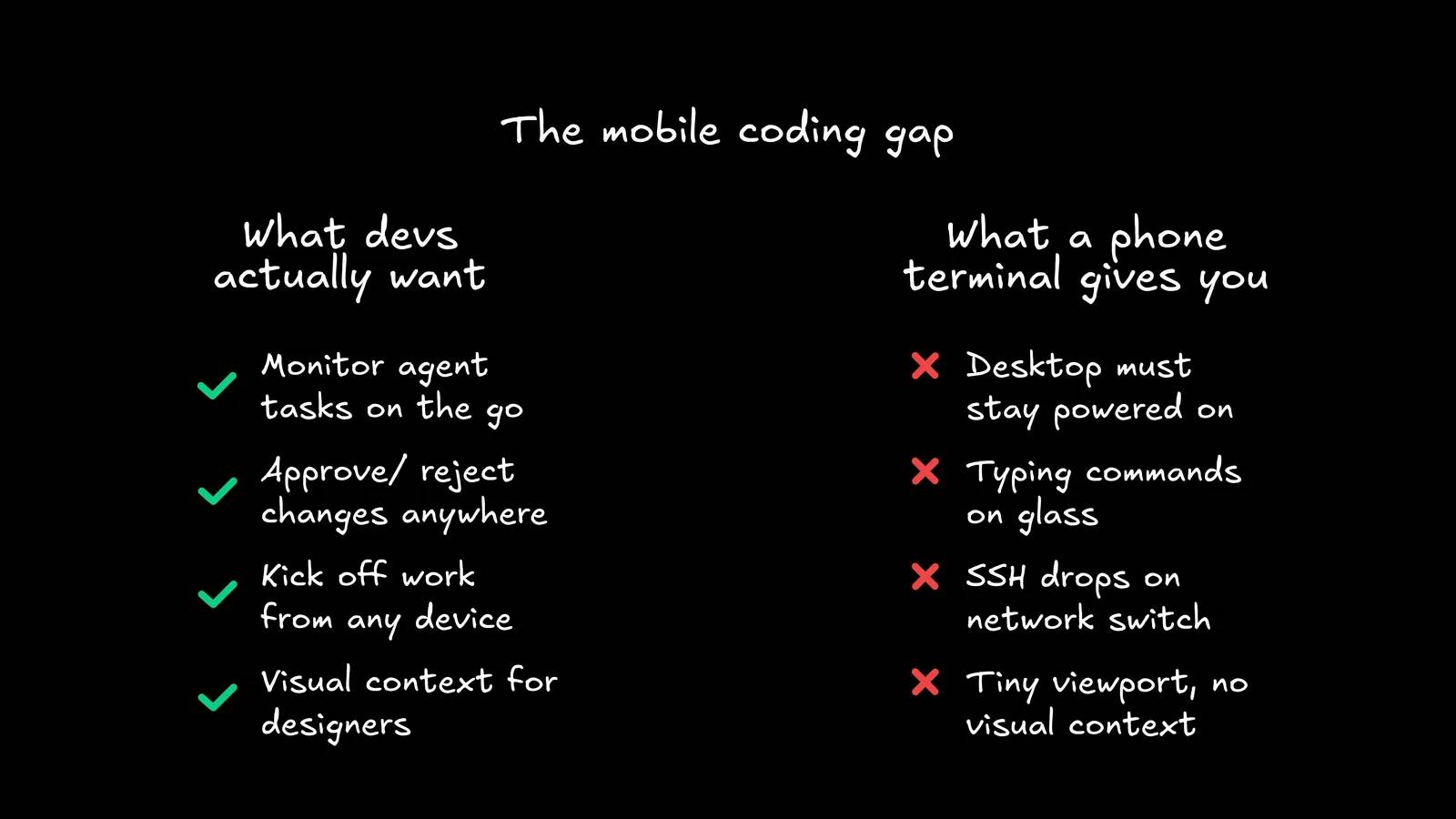

But why did developers want "Claude Code on mobile" in the first place? Not because they enjoy typing `git push` with their thumbs. Nobody woke up dreaming of a 390-pixel-wide command line. When you look at what people actually ask for on X and Reddit, it boils down to four things:

- Monitor long-running tasks without returning to your desk.

- Approve or reject changes when prompted.

- Start work from anywhere: a sudden idea should be coded before you finish making your coffee.

- Keep shipping the product without being tied to a workstation.

This isn't a command-line interface issue. This is a dispatch issue.

If you've ever tried running multiple agents, you know the difficulties: context fragmentation, resource conflicts, inconsistent reproducibility, and the age-old question of "which agent is doing what?" Those problems are hard enough on a 27-inch screen. Shrinking the viewport to 6 inches doesn't make them any easier; it just makes them harder to see.

Think of it this way. On a laptop, a terminal-based agent already offers limited visibility: you're watching a stream of text and hoping the diff makes sense. Now remove 85% of your screen area, add a virtual keyboard covering half of what's left, and try reviewing a 200-line component change while your phone autocorrects `useState` to "Use State".

This isn't a minor downgrade. It's a completely different (and worse) model of interaction.

The terminal on your phone gives you direct access to an agent, but not context. You can't see visual diffs, you can't examine a component side by side with its design spec, and you can't get an overview of three agents running in parallel.

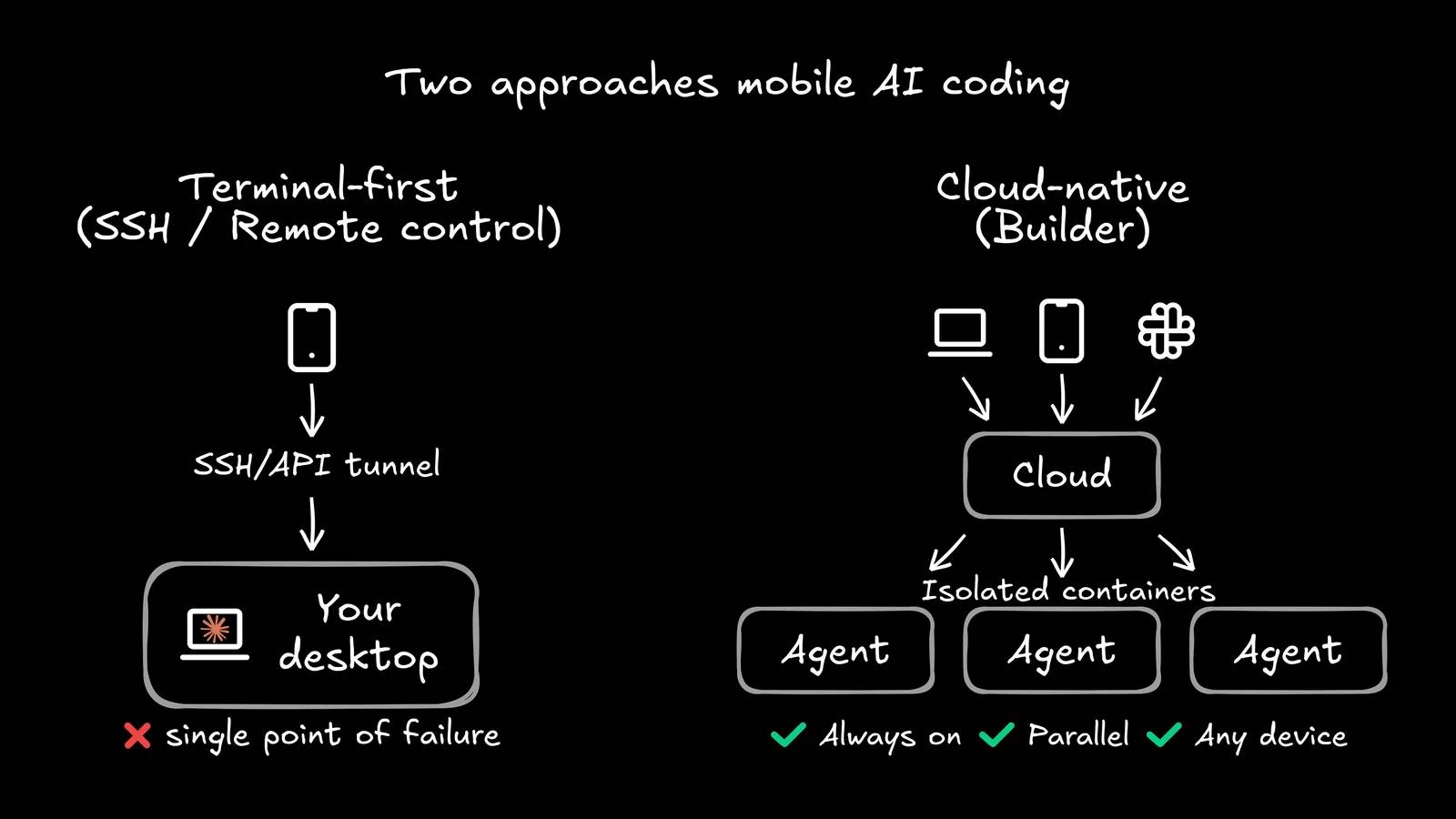

Another approach: Cloud-based agents

What happens if the agent isn't tied to your laptop?

That's the premise behind Builder. Instead of running agents locally and connecting to them from your phone, Builder runs them in the cloud by default. Agents execute in an isolated container, not on your localhost, which changes the calculus entirely:

- Battery life doesn't matter. Your laptop can be asleep, mid-update, or even dead. The agent keeps running.

- No port conflicts. Each run gets its own clean environment. No more zombie processes fighting over port 3000.

- Trigger from anywhere. Launch an agent from Slack, Jira, Linear, or a notification on your phone. The interface adapts to wherever you are.

- Visual context. Instead of a terminal stream, you get a unified interface: design, source code, agent chat, and Git diffs all in one place. You can actually see what the agent has built.

The key change here is in the architecture. MCP standardizes the tool layer, so your agents use the same language whether triggered from a browser, Slack, or push notifications. And because everything runs in the cloud, the experience isn't degraded by your device. Your phone becomes the control center, not a mini-laptop.

This also matters for teams. When agents run locally, orchestration is a solo activity: one developer, one machine, one session. When they run in the cloud with a shared interface, your whole team (project managers, designers, developers) can prototype, review, and iterate on a shared visual canvas connected to your actual codebase.

A project manager can approve layouts from their phone. A designer can flag spacing errors without cloning the repository. A lead engineer can review three parallel runs from a single console. That workflow scales. A terminal on your phone doesn't.

And for developers who care about shipping code that actually respects their codebase, cloud execution means every run starts from the same reproducible baseline. No more "it works on my machine" when that "machine" is your phone on a 3G connection.

The terminal is just the starting point, not the destination.

Using Claude Code on a phone is genuinely cool. It shows that developers crave mobility in their AI workflows, and the community's creativity in making that happen, from SSH tricks to Railway deployments to Anthropic's official Remote Control, is impressive.

But the answer to "how do I use AI agents on the go?" isn't shrinking your terminal; it's rethinking where the agent runs.

Cloud-based agents that run independently of your local machine and are reachable from any device through an intuitive, context-rich interface: that's the real key. The answer isn't smaller screens but a bigger architecture.

The era of desk-bound AI agents is coming to an end. The question is whether you'll free them with SSH, or with something built for the cloud from the start.

Shared by David Pac