Perceptron in Machine Learning

A perceptron is a type of artificial neuron. It is the simplest possible neural network. Neural networks are among the building blocks of modern machine learning.

Frank Rosenblatt

Frank Rosenblatt (1928–1971) was an American psychologist notable in the field of artificial intelligence. In 1957, he started something groundbreaking: he "invented" the Perceptron program on an IBM 704 computer at Cornell Aeronautical Laboratory.

Scientists had discovered that brain cells (neurons) receive input from our senses as electrical signals. The neurons, in turn, use these signals to store information and to make decisions based on previous input.

Rosenblatt's idea was that the Perceptron could mimic these principles of the brain, with the ability to learn and make decisions.

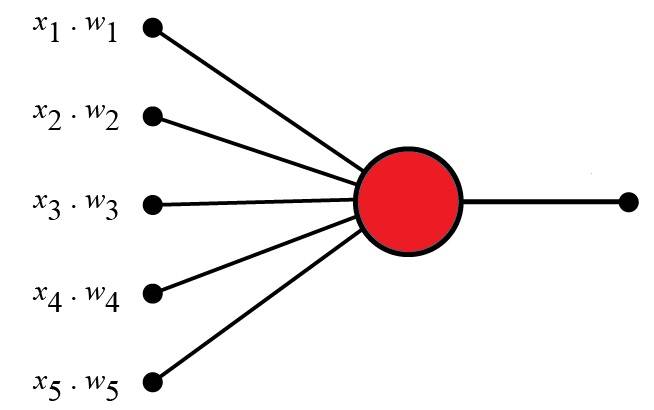

Perceptron

The original perceptron was designed to take some binary input and produce a binary output (0 or 1).

The idea is to give each input a weight that expresses how important it is. The inputs are multiplied by their weights and added up, and the output is yes (1, true) only if this weighted sum exceeds a threshold value; otherwise it is no (0, false).
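Written as a formula, the rule could look like this. The symbols $y$ (output), $\theta$ (threshold) and $n$ (number of inputs) are notation assumed here for illustration; $x_i$ and $w_i$ are the inputs and weights:

$$
y =
\begin{cases}
1 & \text{if } \sum_{i=1}^{n} w_i x_i > \theta \\
0 & \text{otherwise}
\end{cases}
$$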

Examples of Perceptrons

Imagine a perceptron (in your brain). The perceptron tries to decide whether or not you should go to a concert.

Is the artist talented? Is the weather good? How much weight should each of these factors have?

| Criteria | Input | Weight |
|---|---|---|
| Good artist | x1 = 0 or 1 | w1 = 0.7 |
| Good weather | x2 = 0 or 1 | w2 = 0.6 |
| I have a friend with me | x3 = 0 or 1 | w3 = 0.5 |
| Food is served | x4 = 0 or 1 | w4 = 0.3 |
| Alcoholic beverages are served | x5 = 0 or 1 | w5 = 0.4 |

Perceptron algorithm

Frank Rosenblatt proposed this algorithm:

- Set a threshold value

- Multiply all inputs by their weights

- Add up all the results

- Activate the output

1. Set the threshold value:

- Threshold = 1.5

2. Multiply all inputs by their weights:

- x1 * w1 = 1 * 0.7 = 0.7

- x2 * w2 = 0 * 0.6 = 0

- x3 * w3 = 1 * 0.5 = 0.5

- x4 * w4 = 0 * 0.3 = 0

- x5 * w5 = 1 * 0.4 = 0.4

3. Add up all the results:

- 0.7 + 0 + 0.5 + 0 + 0.4 = 1.6 (Weighted sum)

4. Activate the output:

Return true if the sum is greater than the threshold. Here 1.6 > 1.5, so the output is true ("Yes, I will go to the concert").

Note:

- A weather weight of 0.6 might be ideal for you, but it could be different for someone else. A higher value means the weather is more important to them.

- The threshold value is 1.5 for you, but it may be different for someone else. A lower threshold means they are more inclined to go to concerts.
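To see how personal weights and thresholds can change the decision, here is a minimal sketch. The helper name `decide` and the second person's numbers are made up for illustration; only your own weights and the threshold of 1.5 come from the example above.

```javascript
// Hypothetical helper: true when the weighted sum exceeds the threshold.
function decide(inputs, weights, threshold) {
  let sum = 0;
  for (let i = 0; i < inputs.length; i++) {
    sum += inputs[i] * weights[i];
  }
  return sum > threshold;
}

// Same situation for both people:
// good artist, bad weather, friend comes along, no food, drinks served.
const inputs = [1, 0, 1, 0, 1];

// Your settings, taken from the example above.
const you = { weights: [0.7, 0.6, 0.5, 0.3, 0.4], threshold: 1.5 };

// Assumed settings for someone who cares more about the weather
// and needs more convincing (illustrative values only).
const friend = { weights: [0.5, 0.9, 0.5, 0.3, 0.4], threshold: 1.8 };

const youGo = decide(inputs, you.weights, you.threshold);
const friendGoes = decide(inputs, friend.weights, friend.threshold);

console.log(youGo, friendGoes); // true false
```

With identical inputs, the two people reach different decisions purely because their weights and thresholds differ.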

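For example:

```javascript
const threshold = 1.5;
const inputs = [1, 0, 1, 0, 1];
const weights = [0.7, 0.6, 0.5, 0.3, 0.4];

// Weighted sum: multiply each input by its weight and add the results.
let sum = 0;
for (let i = 0; i < inputs.length; i++) {
  sum += inputs[i] * weights[i];
}

// Activate: true when the weighted sum exceeds the threshold.
const activate = (sum > threshold);
```

Perceptrons in Artificial Intelligence (AI)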

- The perceptron is an artificial neuron.

- It is inspired by the function of a biological neuron.

- It plays a crucial role in Artificial Intelligence.

- It is a crucial building block in neural networks.

To understand the theory behind it, we can analyze its components:

- Perceptron input (nodes)

- Node value (1, 0, 1, 0, 1)

- Node weights (0.7, 0.6, 0.5, 0.3, 0.4)

- Total

- Threshold value

- Activation function

- Output (sum > threshold)

1. Perceptron input

- A perceptron receives one or more inputs.

- The inputs to a perceptron are called nodes.

- Nodes have both values and weights.

2. Node value (input value)

- The input nodes have binary values of either 1 or 0.

- This can be interpreted as true or false / yes or no.

- The values are: 1, 0, 1, 0, 1

3. Node Weights

- Weights are values assigned to each input.

- The weights represent the strength of each node.

- A higher value means that the input has a stronger influence on the output.

- The weights are: 0.7, 0.6, 0.5, 0.3, 0.4

4. Total

- The perceptron computes the weighted sum of its inputs.

- It multiplies each input by its corresponding weight and sums the results.

- The total is: 0.7*1 + 0.6*0 + 0.5*1 + 0.3*0 + 0.4*1 = 1.6
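The same calculation can be sketched in code, keeping the per-node products visible before they are added. The variable name `contributions` is an assumption chosen for this illustration.

```javascript
const inputs = [1, 0, 1, 0, 1];
const weights = [0.7, 0.6, 0.5, 0.3, 0.4];

// Contribution of each node: its input value times its weight.
const contributions = inputs.map((x, i) => x * weights[i]);

// Weighted sum: add up all contributions.
const sum = contributions.reduce((total, c) => total + c, 0);

console.log(contributions);   // [ 0.7, 0, 0.5, 0, 0.4 ]
console.log(sum.toFixed(1));  // "1.6"
```

Nodes whose input is 0 contribute nothing, no matter how large their weight is.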

5. Threshold

- The threshold is the value the weighted sum must exceed for the perceptron to activate (output 1); otherwise it stays inactive (output 0).

- In this example, the threshold value is: 1.5

6. Activation function

- After the summation, the perceptron applies the activation function.

- The goal is to introduce nonlinearity into the output. The function determines whether the perceptron should activate, based on the weighted sum.

- In this example, the activation function is a simple threshold test:

(sum > threshold) == (1.6 > 1.5)
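As a rough sketch, this threshold test can be written as a small function that maps any sum to 1 or 0. The name `step` is an assumption (it is a common name for this kind of activation), and the extra test values are illustrative.

```javascript
// Step activation: 1 if the sum is strictly above the threshold, else 0.
function step(sum, threshold) {
  return sum > threshold ? 1 : 0;
}

const threshold = 1.5;

console.log(step(1.6, threshold)); // 1 (the concert example)
console.log(step(1.5, threshold)); // 0 (equal is not greater)
console.log(step(0.9, threshold)); // 0
```

Because the comparison is strict, a sum exactly equal to the threshold does not activate the perceptron.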

Output

The final output of the perceptron is the result of the activation function.

It represents the perceptron's decision or prediction based on the inputs and weights.

The activation function maps the weighted sum to a binary value.

The binary values 1 or 0 can be interpreted as true or false / yes or no.

The output is 1 because (sum > threshold) == true.

Perceptron Learning

Perceptrons can learn from examples through a process called training.

During training, the perceptron adjusts its weights based on observed errors. This is typically done with a learning algorithm such as the perceptron learning rule or, in multilayer networks, backpropagation.

The learning process provides the perceptron with labeled examples where the desired output is already known. The perceptron compares its output to the desired output and adjusts its weights accordingly, aiming to minimize the error between the predicted and desired outputs.

The learning process allows the perceptron to find weights that enable it to make accurate predictions for new, unseen inputs.
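A minimal sketch of training with the perceptron learning rule might look like this. Everything specific here is an assumption for illustration: the toy dataset (logical AND), the starting weights of 0, the learning rate of 1, and the use of a `bias` term, which plays the role of a negative threshold so that the threshold can be learned together with the weights.

```javascript
// Labeled examples for logical AND (an assumed toy dataset).
const examples = [
  { inputs: [0, 0], target: 0 },
  { inputs: [0, 1], target: 0 },
  { inputs: [1, 0], target: 0 },
  { inputs: [1, 1], target: 1 },
];

const weights = [0, 0];
let bias = 0;           // acts like a negative threshold
const learningRate = 1; // assumed value; keeps the arithmetic exact

// Output 1 when the weighted sum plus bias is above 0.
function predict(inputs) {
  let sum = bias;
  for (let i = 0; i < inputs.length; i++) {
    sum += inputs[i] * weights[i];
  }
  return sum > 0 ? 1 : 0;
}

// Repeat over the examples until one full pass makes no mistakes.
for (let epoch = 0; epoch < 100; epoch++) {
  let mistakes = 0;
  for (const { inputs, target } of examples) {
    const error = target - predict(inputs); // -1, 0 or 1
    if (error !== 0) {
      mistakes++;
      // Perceptron learning rule: shift weights toward the desired output.
      for (let i = 0; i < weights.length; i++) {
        weights[i] += learningRate * error * inputs[i];
      }
      bias += learningRate * error;
    }
  }
  if (mistakes === 0) break;
}

const outputs = examples.map((e) => predict(e.inputs));
console.log(outputs); // [ 0, 0, 0, 1 ]
```

The weights only change when the prediction is wrong, which is what "adjusting weights based on observed errors" means in practice. This works here because AND is linearly separable.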

Note

Clearly, a decision CANNOT be made by a single neuron alone.

Other neurons must provide additional input:

- Is the artist talented?

- Is the weather good?

- ...

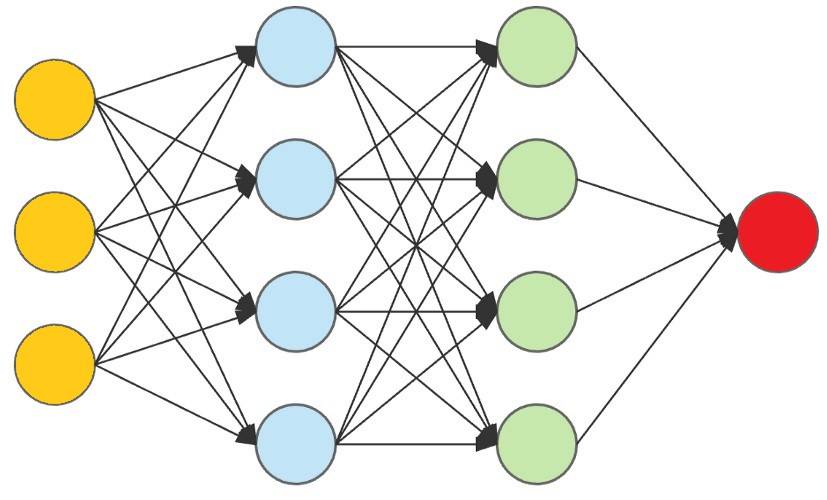

Multilayer perceptrons can be used to make more complex decisions.

Although perceptrons have had a significant impact on the development of artificial neural networks, a single perceptron is limited to learning linearly separable patterns.

However, by stacking multiple perceptrons together in layers and incorporating nonlinear activation functions, neural networks can overcome this limitation and learn more complex patterns.
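As an illustration of stacking, the sketch below builds XOR, a classic pattern that is not linearly separable, from three threshold perceptrons arranged in two layers. XOR is chosen here as an example and is not part of the original text; the weights and thresholds are hand-picked assumptions, not learned values.

```javascript
// A single perceptron with a step activation.
function perceptron(inputs, weights, threshold) {
  let sum = 0;
  for (let i = 0; i < inputs.length; i++) {
    sum += inputs[i] * weights[i];
  }
  return sum > threshold ? 1 : 0;
}

// Two layers of perceptrons with hand-picked weights and thresholds.
function xor(a, b) {
  // Hidden layer: two perceptrons look at the same inputs.
  const or = perceptron([a, b], [1, 1], 0.5);      // 1 if a or b is 1
  const nand = perceptron([a, b], [-1, -1], -1.5); // 0 only if both are 1
  // Output layer: fires only when both hidden perceptrons fire (AND).
  return perceptron([or, nand], [1, 1], 1.5);
}

console.log(xor(0, 0), xor(0, 1), xor(1, 0), xor(1, 1)); // 0 1 1 0
```

No single set of two weights and one threshold can produce this output, but the small two-layer arrangement can.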

Neural network

The perceptron defines the first step into neural networks.

Perceptrons are often used as building blocks for more complex neural networks, such as multilayer perceptrons (MLPs) or deep neural networks (DNNs).

By combining multiple perceptrons into layers and connecting them in a network structure, these models can learn and represent complex patterns and relationships in data, enabling tasks such as image recognition, natural language processing, and decision-making.

Shared by Micah Soto
