What is LM Studio? What are its applications?

LM Studio is a software platform that allows users to run AI language models locally on their computers.

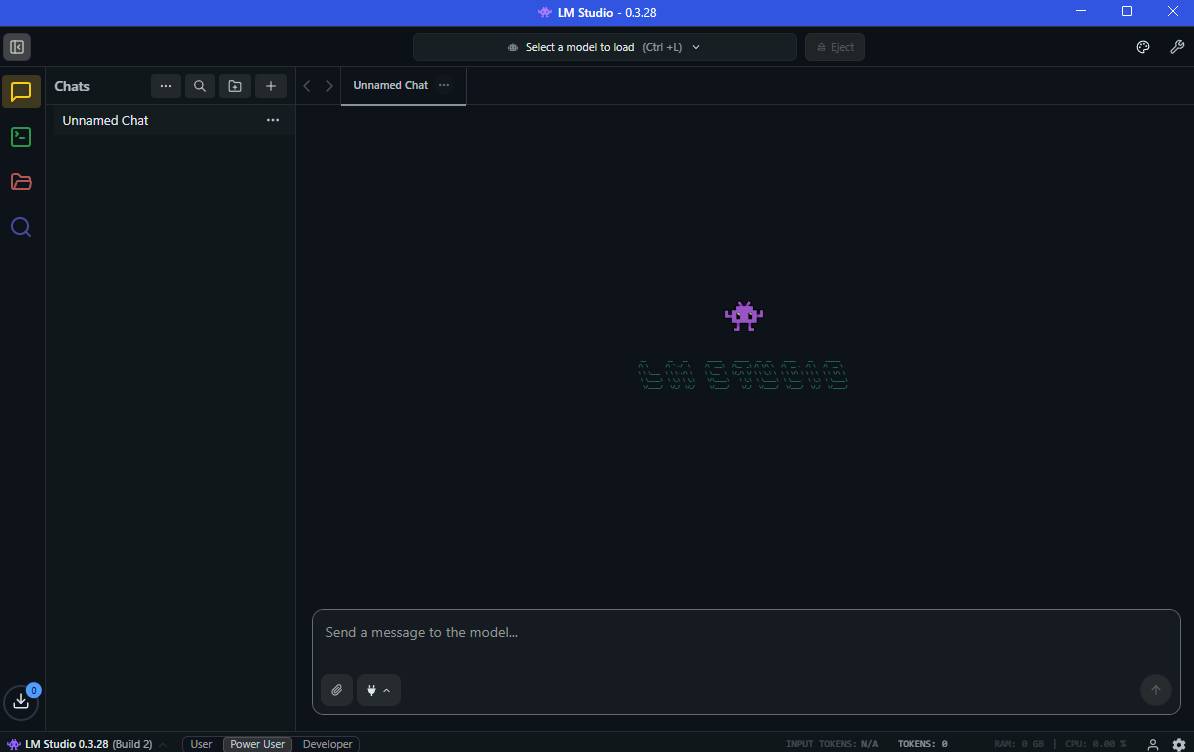

If you're interested in running AI models locally, LM Studio is worth a look. It is a desktop application that runs large language models (LLMs) directly on your computer without relying on cloud services.

This tool provides an easy-to-use interface for downloading, managing, and interacting with local AI models. This makes it popular with developers, researchers, and privacy-conscious users who want to experiment with artificial intelligence on their own computers.

What is LM Studio?

LM Studio lets you run AI language models on your own machine. Instead of sending prompts to an online AI service, it loads the model and generates responses directly on your system.

It supports many major open-source large language models and provides tools to interact with them through a graphical interface.

With LM Studio, users can:

- Download AI models

- Run a local AI chat assistant.

- Test prompts and the model's responses.

- Develop applications using local AI models.

Because everything runs locally, users retain control over their data.

Key features of LM Studio

LM Studio includes several features designed to make it easier to use local AI.

Key features include:

- Local execution of AI models.

- Integrated chat interface for interacting with the model.

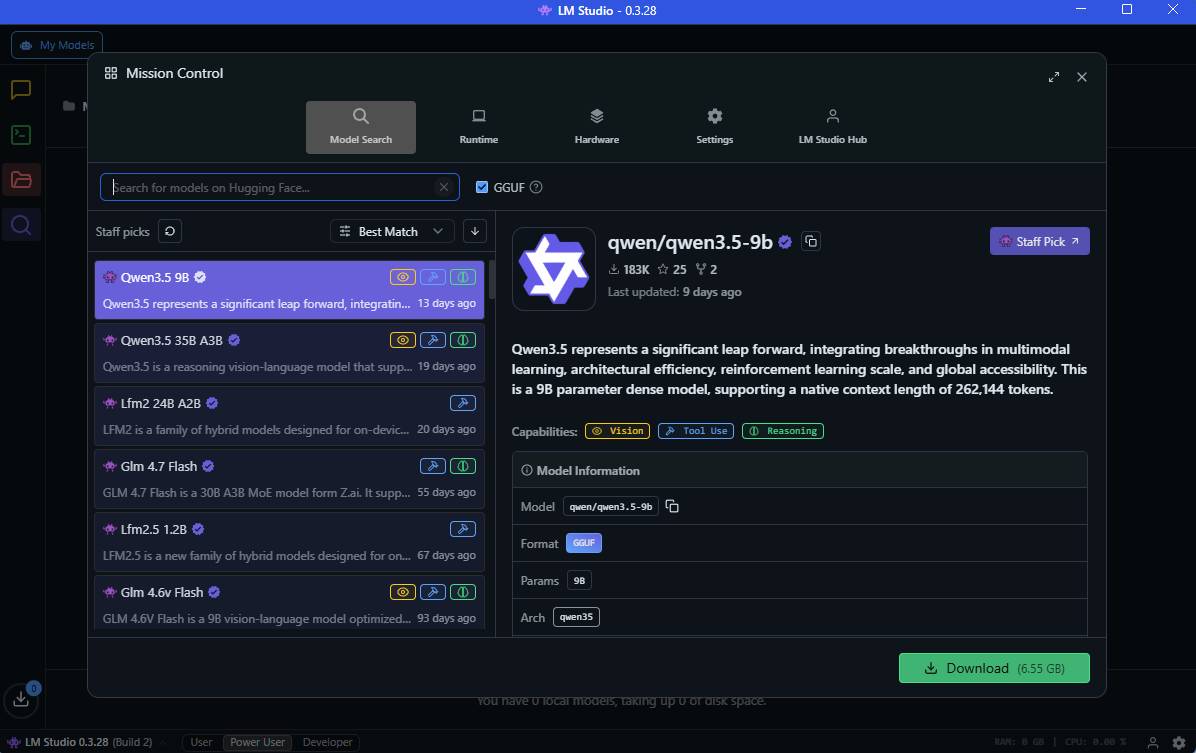

- Model download and management tool

- API support for developers

- Compatibility with open-source models

These features make it easier to experiment with AI without a complex setup.

Why do people use LM Studio?

LM Studio became popular because it simplifies the process of running AI models locally.

Some of the benefits include:

- No internet connection is required after downloading the model.

- Better privacy, because data stays on the device.

- There are no API usage fees.

- Easy-to-use interface for testing AI prompts.

This makes LM Studio useful for learning and experimenting.

How LM Studio works

LM Studio works by downloading AI models to your computer and running them locally.

The basic workflow is as follows:

- Install LM Studio on your computer.

- Download a compatible AI model.

- Run the model inside the application.

- Interact with the model through a chat interface or API.

Performance depends on your hardware, chiefly the available RAM and the CPU or GPU.
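As a rough illustration of the API route in the last step, the sketch below sends a single chat message to a model loaded in LM Studio. It assumes the local server is switched on and exposes an OpenAI-compatible endpoint at `http://localhost:1234/v1`. That address, the `local-model` identifier, and the helper names `build_chat_request` and `ask_local_model` are assumptions for illustration, so check them against your own setup.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running and serves an
# OpenAI-compatible API at this address. Adjust it to match your setup.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt, model="local-model", temperature=0.7):
    """Build the JSON payload for an OpenAI-style chat completion."""
    return {
        # Placeholder name: use the identifier LM Studio shows for your model.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }


def ask_local_model(prompt, base_url=BASE_URL, timeout=60):
    """Send one prompt to the local server and return the reply text."""
    payload = json.dumps(build_chat_request(prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


# Example call (only works while the local server is running):
# print(ask_local_model("Explain what a local LLM is in one sentence."))
```

The request goes to `localhost`, so the prompt and the reply stay on your machine. Only the standard library is used here to keep the sketch dependency-free; an OpenAI-style client library pointed at the same base URL should work as well.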

Common use cases for LM Studio

There are many practical use cases for LM Studio in various fields.

- Local AI chat assistant: Users can run local AI chatbots to answer questions, generate ideas, or assist with writing.

- Software development: Developers use LM Studio to test prompts and integrate AI features into applications via its API.

- Privacy-focused AI: Running AI locally keeps sensitive data on the user's computer.

- Research and experimentation: Researchers can test different models and prompting techniques without relying on a cloud platform.

- Offline AI tool: LM Studio enables AI interaction without an internet connection once the models are downloaded.
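For the development and research use cases, one simple pattern is to run the same set of prompts against several models and compare the replies side by side. The sketch below shows only the comparison loop. The function `run_prompt_grid`, the stand-in `fake_ask`, and the model names are hypothetical; in practice `ask` would be replaced with a function that calls whatever model you have loaded locally.

```python
from itertools import product


def run_prompt_grid(ask, models, prompts):
    """Call ask(model, prompt) for every combination and collect the replies."""
    results = []
    for model, prompt in product(models, prompts):
        results.append(
            {"model": model, "prompt": prompt, "reply": ask(model, prompt)}
        )
    return results


def fake_ask(model, prompt):
    """Stand-in for a real model call so the sketch runs without a server."""
    return f"[{model}] {prompt.upper()}"


# Hypothetical model names and prompt variants, for illustration only.
models = ["model-a", "model-b"]
prompts = ["Summarize this text.", "Summarize this text in one sentence."]

for row in run_prompt_grid(fake_ask, models, prompts):
    print(f'{row["model"]:8} | {row["prompt"]:38} | {row["reply"]}')
```

Passing the model call in as a function keeps the comparison logic separate from the connection details, so the same loop can be tested offline and then pointed at a real local model.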

System requirements for LM Studio

Running local AI models requires sufficiently powerful hardware.

Typical requirements include:

- Windows, macOS, or Linux system

- At least 8 GB of RAM (16 GB recommended)

- A modern CPU or GPU

- Sufficient storage space for AI models.

Larger models may require more powerful hardware.
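To get a feel for why larger models need more memory, a common rule of thumb is that the weights take roughly (number of parameters × bits per weight ÷ 8) bytes, plus some working overhead. The sketch below applies that estimate. The 20% overhead factor and the function names are assumptions chosen for illustration, not figures published by LM Studio, and real usage also depends on context length and the runtime.

```python
def estimate_model_memory_gb(params_billions, bits_per_weight, overhead=1.2):
    """Rough memory estimate in GB (10^9 bytes) for loading a model.

    overhead is an assumed multiplier for caches and runtime buffers.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * overhead / 1e9


def fits_in_ram(params_billions, bits_per_weight, ram_gb, overhead=1.2):
    """Return True if the estimate is within the given amount of RAM."""
    return estimate_model_memory_gb(params_billions, bits_per_weight, overhead) <= ram_gb


# Example: a 7-billion-parameter model stored at 4 bits per weight.
needed = estimate_model_memory_gb(7, 4)
print(f"Estimated memory: {needed:.1f} GB")
print("Fits in 8 GB:", fits_in_ram(7, 4, ram_gb=8))
```

By this estimate a 7-billion-parameter model at 4 bits per weight needs about 4.2 GB, which is consistent with the 8 GB minimum above. The check ignores memory used by the operating system and other applications, so treat the result as a lower bound.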

Conclusion

Knowing what LM Studio is and what it can be used for makes it easier to explore local AI without relying on cloud services. By running AI models directly on personal computers, LM Studio provides a convenient environment for experimentation, development, and privacy-focused AI applications.

As local AI tools continue to evolve, platforms like LM Studio are becoming increasingly important for developers, researchers, and anyone interested in running artificial intelligence on their own devices.
