- Quick comparison: Key differences between Ollama and LM Studio

- Learn about Ollama: Precision and speed on the command line.

- Discover LM Studio: Local Artificial Intelligence Simplified Visually

- Model compatibility and accessibility

- Performance and Optimization

- Developer customization and control capabilities

- Conclusion: Should you choose Ollama or LM Studio?

Artificial intelligence (AI) is rapidly shifting towards local processing, a trend driven by privacy concerns, faster inference speeds, and independence from cloud-based APIs. Within this wave of decentralized AI, two platforms have garnered significant community attention: Ollama and LM Studio.

Both tools make it easy to run large language models (LLMs) directly on a personal computer, but they take different approaches. Ollama is minimalist, developer-focused, and controlled from the command line, while LM Studio is polished, beginner-friendly, and built around a graphical user interface (GUI).

If you're wondering which to choose, this detailed comparison between Ollama and LM Studio will give you a clear answer, based on real-world experience.

Quick comparison: Key differences between Ollama and LM Studio

| Feature | Ollama | LM Studio |
| --- | --- | --- |
| User interface | Command-line interface (CLI) | Graphical user interface (GUI) |
| Ease of use | Ideal for developers | Suitable for beginners |
| Setup & installation | Requires terminal commands | Plug-and-play desktop installer |
| Customization | Advanced configuration support | Moderate; focused on chat functionality and local model loading |
| Model access | Uses its own model registry (`ollama pull`) | Connects directly to Hugging Face |
| Performance | Faster and more resource-efficient | Slightly heavier, but with a smoother visual experience |
| Integration | Easily integrated into applications and pipelines | Limited to the local GUI or its local API server |
| Open source | Fully open source and community-developed | Proprietary but free |
| Platform support | macOS, Linux, Windows (preview) | macOS, Windows, Linux (beta) |
| Suitable for | AI developers, researchers, and engineers | Educators, students, creators, testers |

Learn about Ollama: Precision and speed on the command line.

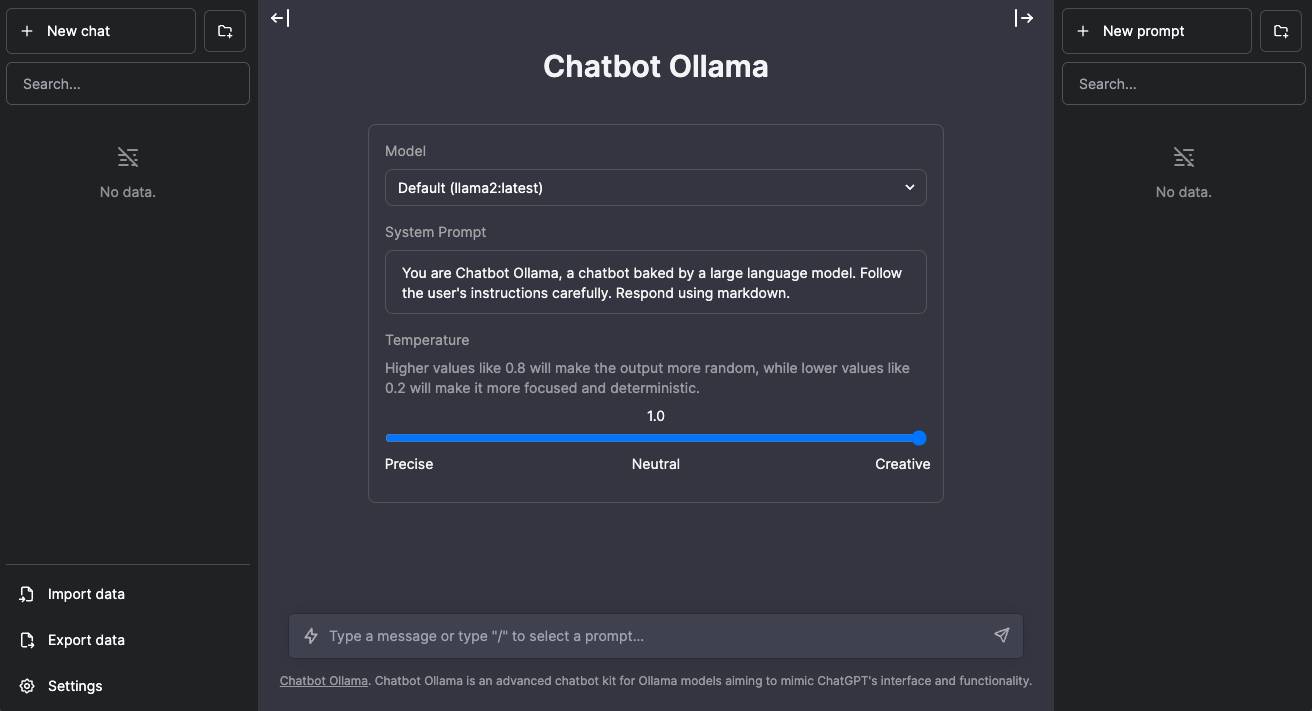

Ollama is a local LLM runner that has become popular with developers who value precision, flexibility, and speed. It runs from a command-line interface (CLI), which keeps it lightweight and easy to script.

Unlike typical AI chat tools that rely on cloud connectivity, Ollama lets you run advanced models such as Llama 2, Mistral, or Code Llama directly on your device. It is completely open source and built on the llama.cpp backend, which enables efficient inference even on standard consumer hardware.

What truly sets Ollama apart is its Modelfile, a configuration file that defines how a model behaves. Developers can create variants of a base model, assign system prompts, or adjust parameters such as temperature to shape the model's personality. Think of it as the "Dockerfile" of local LLMs.
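To make the Modelfile idea concrete, here is a minimal sketch that renders one from Python. It relies only on the `FROM`, `PARAMETER`, and `SYSTEM` instructions; the helper name `render_modelfile`, the base model, and the parameter values are illustrative assumptions, not recommendations from Ollama.

```python
def render_modelfile(base_model: str, system_prompt: str,
                     temperature: float, num_ctx: int) -> str:
    """Return Modelfile text built from FROM, PARAMETER, and SYSTEM lines.

    The values passed in are illustrative; tune them for your own model.
    """
    lines = [
        f"FROM {base_model}",                     # base model to derive from
        f"PARAMETER temperature {temperature}",   # sampling temperature
        f"PARAMETER num_ctx {num_ctx}",           # context window in tokens
        f'SYSTEM """{system_prompt}"""',          # system prompt for the variant
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical variant: a terse code reviewer built on top of Mistral.
    print(render_modelfile("mistral", "You are a concise code reviewer.", 0.2, 4096))
```

Saving the output as `Modelfile` and running `ollama create reviewer -f Modelfile` followed by `ollama run reviewer` should register and start the variant (the name `reviewer` is a placeholder).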

Ollama's main strengths include:

- Local-first architecture: Keeps all data on your machine, enhancing privacy.

- Quick commands: Models can be downloaded and run immediately from the CLI.

- Flexible integration: Suitable for embedding into custom applications or backend workflows.

- Performance optimization: Typically uses less memory and responds faster than GUI-based tools.

- Community-based updates: Its open-source nature encourages transparency and innovation.

However, for non-developers, the lack of a graphical user interface (GUI) can be a drawback. Ollama's strengths lie in control and performance rather than guided, step-by-step onboarding, and that is exactly why developers love it.
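As an illustration of the backend integration described above, the following sketch calls Ollama's local REST endpoint using only the Python standard library. It assumes the Ollama server is running on its default address (`http://localhost:11434`) and that the named model has already been pulled; the function names are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text from the JSON reply."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `generate("mistral", "Summarize GGUF in one sentence.")` would return the model's reply as a plain string, which makes it straightforward to drop into a script or a backend job.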

Discover LM Studio: Local Artificial Intelligence Simplified Visually

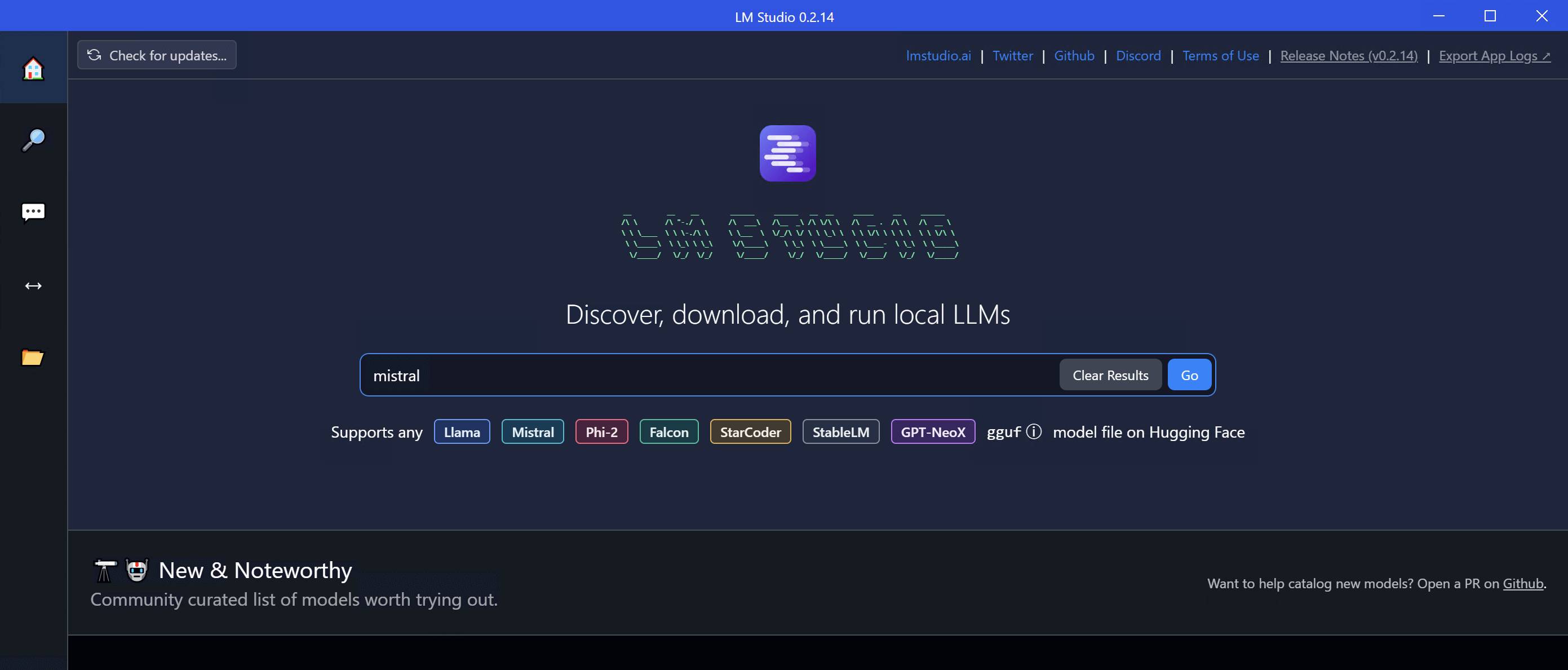

On the other hand, LM Studio focuses on accessibility and user experience. This tool is specifically designed as a desktop application to run large language models smoothly.

Its graphical user interface resembles ChatGPT, offering a clean chat window where you can load models, experiment with different prompts, and inspect responses in real time. This removes the need for command-line operations, which suits users who simply want to experiment without any technical setup.

LM Studio integrates with Hugging Face, allowing you to browse and download models like Mistral, Llama, or Vicuna directly within the app. It also runs an OpenAI-compatible local server, meaning developers can integrate it into existing applications using the same API format as OpenAI without sending any data to the cloud.
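The OpenAI-compatible server can be exercised with a plain HTTP request. The sketch below assumes LM Studio's local server has been started on port 1234 (its usual default, but check the app's server tab) and that a model is loaded; the model identifier and function names are placeholders.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is enabled on its usual default port.
LMSTUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_chat_request(model: str, user_message: str,
                       system_prompt: str = "You are a helpful assistant.",
                       temperature: float = 0.7) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local server."""
    payload = {
        "model": model,  # placeholder: use the identifier shown in LM Studio
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
        "temperature": temperature,
    }
    return urllib.request.Request(
        LMSTUDIO_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model: str, user_message: str, timeout: float = 120.0) -> str:
    """Send one user message and return the assistant's reply text."""
    request = build_chat_request(model, user_message)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read())
    return body["choices"][0]["message"]["content"]
```

Because the request and response follow the OpenAI chat format, an application already written against OpenAI's API can usually be pointed at this server by changing only its base URL.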

LM Studio's main strengths include:

- Instant setup: Download, install, and chat with a model in just minutes.

- Intuitive interface: The graphical user interface (GUI) is easy to use for non-technical users.

- Hugging Face integration: Access thousands of community-trained models.

- Offline capability: Hold conversations without relying on an internet connection.

- API flexibility: A local OpenAI-compatible server for developers who want to integrate it into their own applications.

The main limitation is that it doesn't offer as much model-level control or customization as Ollama. It is more an interface for exploring models than a platform for building on them.

Model compatibility and accessibility

Both platforms are based on the same kind of backend (llama.cpp), but they differ in how users access and manage models.

- Ollama: Maintains a curated model repository with optimized Llama, Mistral, and Code Llama builds. You can download models directly with the `ollama pull` command.

- LM Studio: Offers broader exploration through its Hugging Face integration, providing access to thousands of community-contributed models in GGUF format.
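When models are downloaded by hand rather than through either tool, a quick sanity check can help. GGUF files begin with the four-byte magic `GGUF`, so the sketch below simply reads the first four bytes; the function name is hypothetical, and the check does not validate the rest of the file.

```python
GGUF_MAGIC = b"GGUF"  # the first four bytes of every GGUF file


def looks_like_gguf(path: str) -> bool:
    """Return True if the file starts with the GGUF magic bytes.

    This only inspects the header magic; it does not verify that the
    file is complete or that any runtime can actually load it.
    """
    with open(path, "rb") as handle:
        return handle.read(4) == GGUF_MAGIC
```

A check like this catches the common mistake of saving an HTML error page or a partial download under a `.gguf` filename.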

Conclusion: LM Studio wins on breadth of model choice, while Ollama offers better-optimized builds and faster startup times.

Performance and Optimization

When comparing the performance of Ollama and LM Studio, the results often depend on system resources and the user's intended use.

Ollama runs models efficiently with minimal overhead, thanks to its CLI architecture and memory management. It's ideal for repetitive tasks, automation, or embedded use in backend systems.

LM Studio offers stable, smooth performance but needs somewhat more RAM and GPU resources because of its graphical interface. It's better suited to interactive sessions than to high-throughput inference. Informal benchmarks shared by the developer community suggest that Ollama often loads and responds faster, especially on Linux systems.
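Rather than relying on anecdotes, you can measure throughput on your own hardware. A non-streaming reply from Ollama's `/api/generate` endpoint includes timing fields reported in nanoseconds, such as `eval_count`, `eval_duration`, and `load_duration`. The sketch below converts them into more readable numbers; the helper names and the sample values are made up for illustration.

```python
def tokens_per_second(response: dict) -> float:
    """Compute generation speed from an Ollama generate response.

    eval_count is the number of generated tokens and eval_duration is
    the time spent generating them, in nanoseconds.
    """
    eval_duration_ns = response["eval_duration"]
    if eval_duration_ns <= 0:
        raise ValueError("eval_duration must be positive")
    return response["eval_count"] / (eval_duration_ns / 1e9)


def load_seconds(response: dict) -> float:
    """Return the model load time in seconds (0.0 if not reported)."""
    return response.get("load_duration", 0) / 1e9


if __name__ == "__main__":
    # Made-up sample values, not a real measurement.
    sample = {"eval_count": 120, "eval_duration": 4_000_000_000,
              "load_duration": 1_500_000_000}
    print(tokens_per_second(sample), load_seconds(sample))
```

Running the same prompt several times and comparing these figures across tools and machines gives a fairer picture than a single run, since the first request also pays the model load cost.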

Developer customization and control capabilities

If you enjoy tinkering, Ollama provides the tools. With a Modelfile, developers can define system prompts and adjust parameters such as token limits. Combined with the CLI and local API, they can also script model chains or automate how LLMs interact with external data sources.
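A model chain in this sense can be as simple as feeding one model's output into the next prompt. The sketch below is one possible way to do that, not a built-in Ollama feature: `run_chain` and its `generate` callback are hypothetical names, and the callback can wrap any local model call.

```python
from typing import Callable, Sequence


def run_chain(templates: Sequence[str], first_input: str,
              generate: Callable[[str], str]) -> str:
    """Run prompts in sequence, passing each output into the next step.

    Each template must contain an {input} placeholder. `generate` is any
    function that takes a prompt string and returns the model's reply.
    """
    text = first_input
    for template in templates:
        text = generate(template.format(input=text))
    return text


if __name__ == "__main__":
    # Stand-in for a real model call so the example runs without a server.
    def fake_generate(prompt: str) -> str:
        return prompt.upper()

    steps = ["Summarize: {input}", "Translate to French: {input}"]
    print(run_chain(steps, "local models are useful", fake_generate))
```

Injecting `generate` as a parameter keeps the chain logic independent of the runtime, so the same steps can be tested with a stub and later pointed at a local server.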

Meanwhile, LM Studio offers a simpler settings panel for temperature, maximum token length, and system prompt, but provides fewer options for deeper control or scripting.

Conclusion: Ollama excels in customization and flexibility for developers.

Conclusion: Should you choose Ollama or LM Studio?

Ultimately, choosing between Ollama and LM Studio depends on what you value most: control or convenience.

If your work, whether in engineering, research, or web development, depends on flexibility, Ollama is the clear winner. It lets you integrate an open-source tool into a complex work environment quickly, then script it, scale it, and customize it to your needs.

For local AI exploration, those who want a clean, intuitive, chat-style interface will find LM Studio unrivaled in simplicity. It's like having your own offline ChatGPT, with no account to register and no data leaving your machine.

Both are impressive, but they serve different ways of working.