Why do many people stop using ChatGPT permanently?

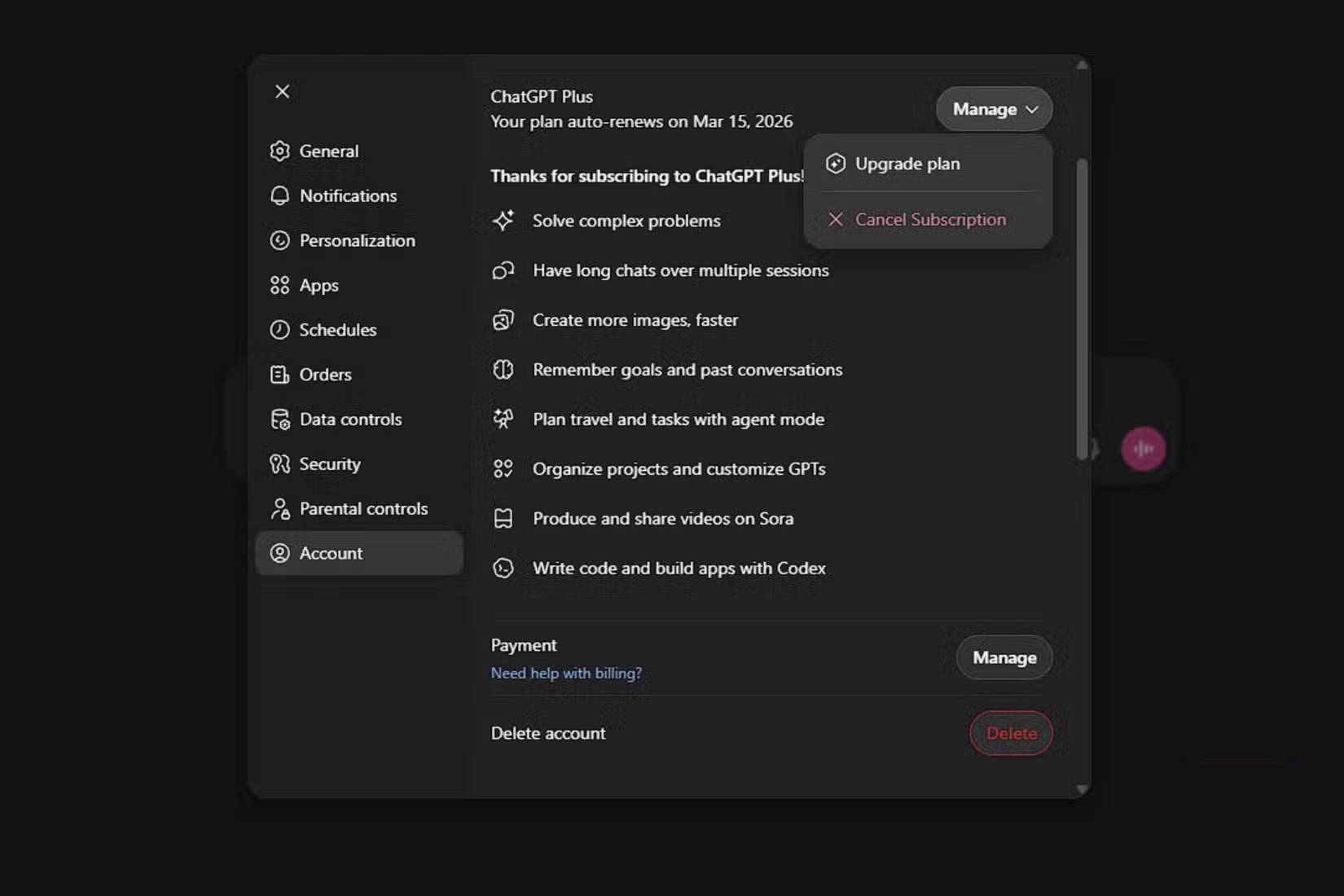

There is currently much discussion surrounding the "Cancel ChatGPT" movement – a campaign to persuade people to cancel their OpenAI subscriptions or stop using ChatGPT altogether. This effort, documented by users on the Reddit forum r/ChatGPT, comes after OpenAI reached an agreement with the US Department of Defense to deploy AI models in classified government networks. The controversy lies not in the agreement itself, but in how it came about. Anthropic had previously signed a contract with the Department of Defense, but the US government objected to the company's safeguards against the use of AI for large-scale domestic surveillance or fully autonomous weapons systems.

The Trump administration classified Anthropic as a supply chain risk, forcing government agencies and contractors to begin phasing out the company's models. Shortly afterward, OpenAI signed a separate agreement with the Department of Defense, leading many to question whether it had accepted the government's terms allowing the use of AI for "all legitimate purposes." OpenAI and its CEO, Sam Altman, have since attempted to mitigate the damage, stating they will amend the agreement with the US government to include clear restrictions on using AI for domestic surveillance and autonomous weapons.

We may never know the exact terms of OpenAI's deal with the US government, but the picture is clear. It certainly looks like the company rushed to secure a valuable contract as soon as Anthropic put its ethical principles first. That's a good reason to abandon ChatGPT, and there's no problem with doing so.

Everyone is joining the "Cancel ChatGPT" movement.

Anthropic, the company behind Claude, was the first frontier AI company to deploy its models in classified networks. In theory, this gave it an advantage over competitors, but things became tense when the US government demanded the removal of all safeguards. The administration wanted all AI contracts to allow systems to be used for "all legitimate purposes," and required Anthropic to remove safeguards against using Claude for large-scale domestic surveillance and fully autonomous weapons systems. This is how Anthropic explained the situation in a blog post:

> Anthropic understands that the Department of Defense, not private companies, makes military decisions. We have never opposed specific military operations nor have we attempted to arbitrarily restrict the use of our technology.
>
> However, in certain circumstances, we believe that artificial intelligence (AI) can undermine, rather than protect, democratic values. Some applications also fall outside the scope of what current technology can safely and reliably accomplish.

In response, the US government threatened to take the unprecedented step of labeling a domestic company a "supply chain risk." At the same time, it threatened to use the Defense Production Act to force Anthropic to remove its safeguards. Anthropic made its position clear: "Whatever happens, these threats do not change our position: We cannot, in good conscience, accept their demands."

It was all the more shocking when OpenAI announced it had reached an agreement with the Department of Defense just two days later, raising a host of questions. Would the company behind ChatGPT yield to the government's demands? The answer seems to be yes.

OpenAI and Altman are currently conducting a damage control campaign, using carefully chosen language to make people think they included safeguards similar to those Anthropic required. That does not add up: why would the US government publicly terminate its partnership with Anthropic, a company deeply embedded in government systems, if it wasn't serious about removing these safeguards? The claim also appears to be untrue, as government officials, including Senior Official Jeremy Lewin, have confirmed that OpenAI's contract "originated from the 'all fair uses' criterion."

Altman's public statements don't quite match what's supposedly going on behind the scenes – CNBC reported that OpenAI's CEO told his employees the company wasn't "given operational say" on how the Department of Defense uses its AI models.

It's easy to say goodbye when the competition is better.

Is ChatGPT really better than Claude or Gemini currently?

Regardless of your opinion on the US government's use of AI for defense purposes, OpenAI's handling of this situation warrants a second look. This is the latest in a series of ethical issues plaguing the company. Previously, according to a report from Platformer, OpenAI disbanded its "mission alignment team." Even before that, OpenAI converted from a non-profit into a for-profit entity, blurring the lines between its original mission of developing artificial general intelligence (AGI) safely for everyone and its new goal of making a profit. With a technology as controversial as AI, it's difficult to continue supporting a company whose values are so clearly in question.

The "Cancel ChatGPT" or "QuitGPT" trend is driving users to switch to Anthropic's Claude and other competitors, and it's easy to see why. Frankly, there's little reason to keep using OpenAI's products, because the company has fallen well behind. Look at Arena, a leading AI model performance comparison website, and you'll see that OpenAI's highest-ranked model placed only 6th in the text processing and programming performance tests, consistently behind models developed by Anthropic, Google, and xAI. In image recognition performance, OpenAI ranked 4th. In document processing performance tests, it ranked 10th.

OpenAI's ChatGPT Image performed best among its models, taking the top spot in Arena's image editing performance test. However, it was only 10 points ahead of Gemini 3 Pro Image. Simply put, if you want the latest and best AI models, you should look for alternatives to ChatGPT. Anthropic, Google, and xAI have not only caught up with OpenAI's models but surpassed them in recent months and years. Now is the best time to drop ChatGPT entirely, as competitors offer better ethics and better performance.
