Which is better for building simulation software: Claude, ChatGPT, or Gemini?

Whatever setup you're using, the model behind it plays a crucial role.

LLMs are developing at a breakneck pace, which can be good or bad depending on your perspective. AI agents are the current trend, but any agent is ultimately limited by the model powering it. So, whatever setup you're using, the model you choose plays a crucial role.

Everyone has heard a lot about Claude. It seems to have always been the top choice for people who genuinely want to get things done – beyond turning photos into cartoons or pouring out their hearts to a chatbot. But many people signed up for ChatGPT when it launched and never had the courage to cancel. Plus, some people have a Google One subscription that comes with Gemini. Paying for a third LLM seems… luxurious.

So what better way to compare the most popular tools than by having them build solar system simulation software?

Let's build a tool to explore the solar system!

A 3D solar system simulator forces the LLM to handle physics, graphics, simulation logic, user experience, and architecture all at once. The project is web-based and self-contained in a single file, with no stack specified: picking one, such as Three.js or Babylon.js, is part of the challenge. There's one more rule: no retries, no edits, no patches. The first result is the final result.

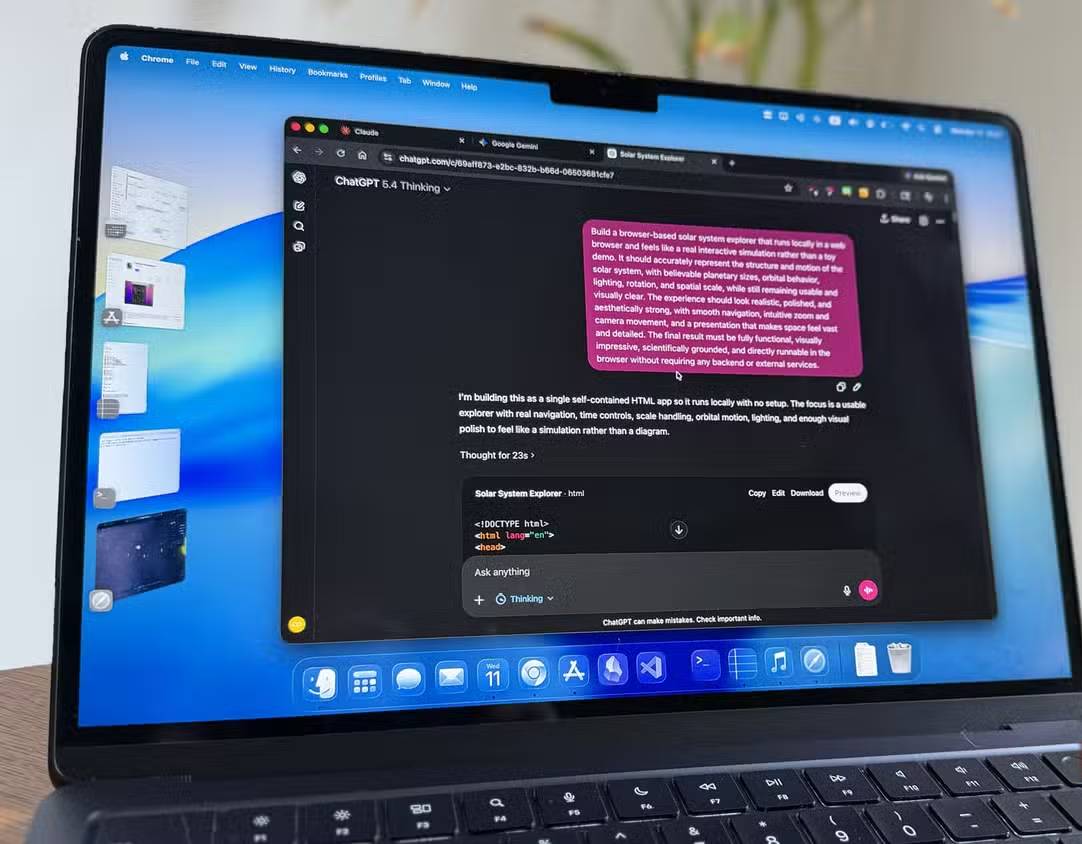

Below is the prompt used for all three tools. It was kept as close to vibe coding as possible, without any rigid technical constraints, and focuses almost entirely on form and function. All three were run in their main web chat interfaces, not desktop apps or specialized coding tools.

Build a browser-based solar system exploration tool that runs locally in a web browser and feels like a true interactive simulation, not a toy demo. It must accurately represent the structure and motion of the solar system, with reliable planetary sizes, orbital behavior, lighting, rotation, and spatial proportions, while remaining user-friendly and intuitively clear. The experience should look realistic, polished, and aesthetically pleasing, with smooth navigation, intuitive zoom and camera movement, and a presentation that makes space feel vast and detailed. The final result must be fully functional, visually impressive, scientifically sound, and capable of running directly in a browser without any backend or external services.

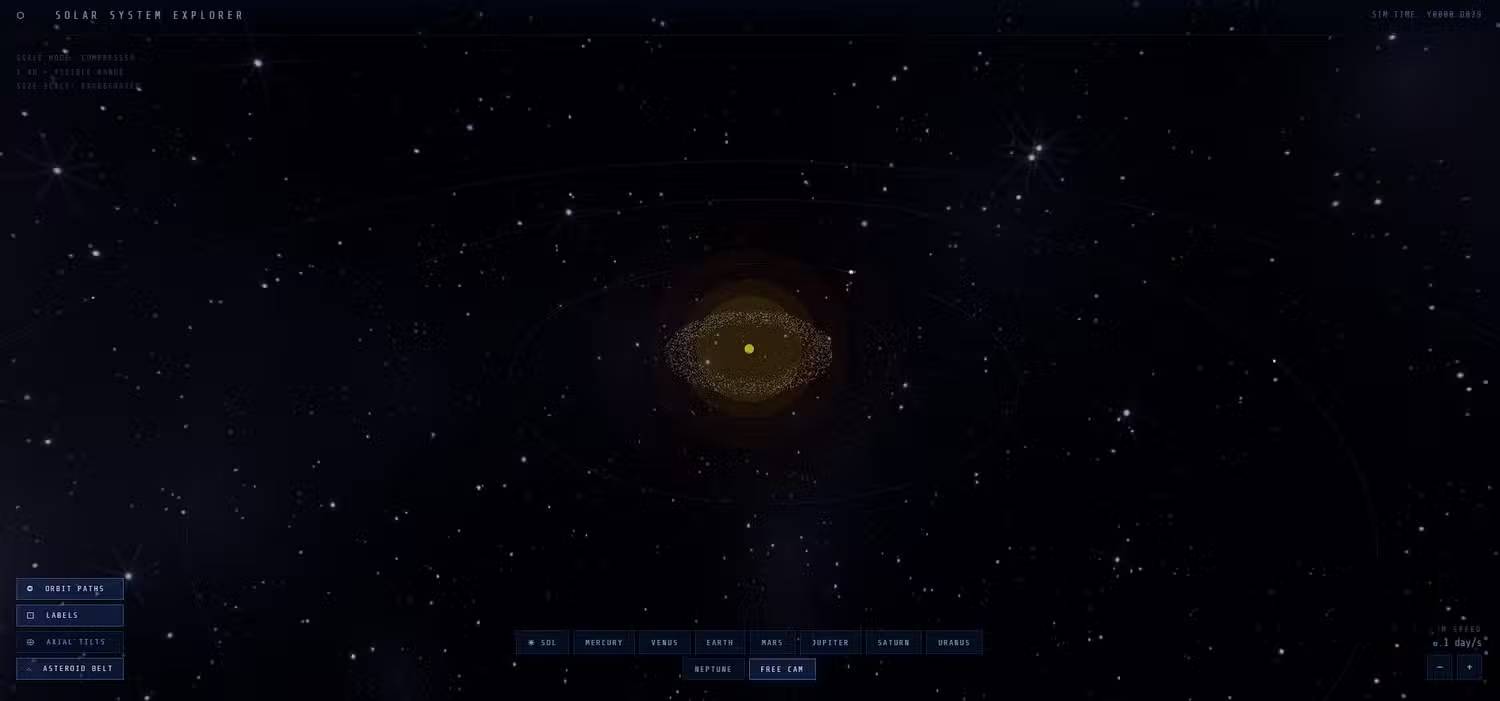

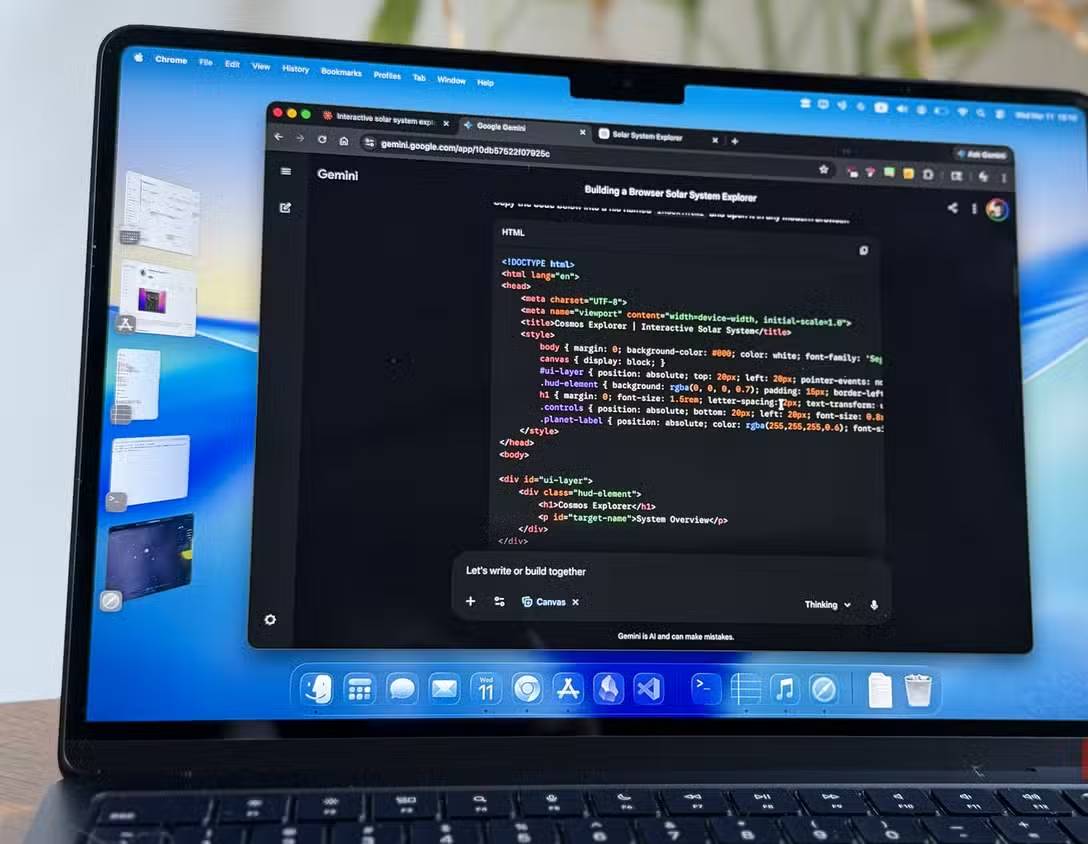

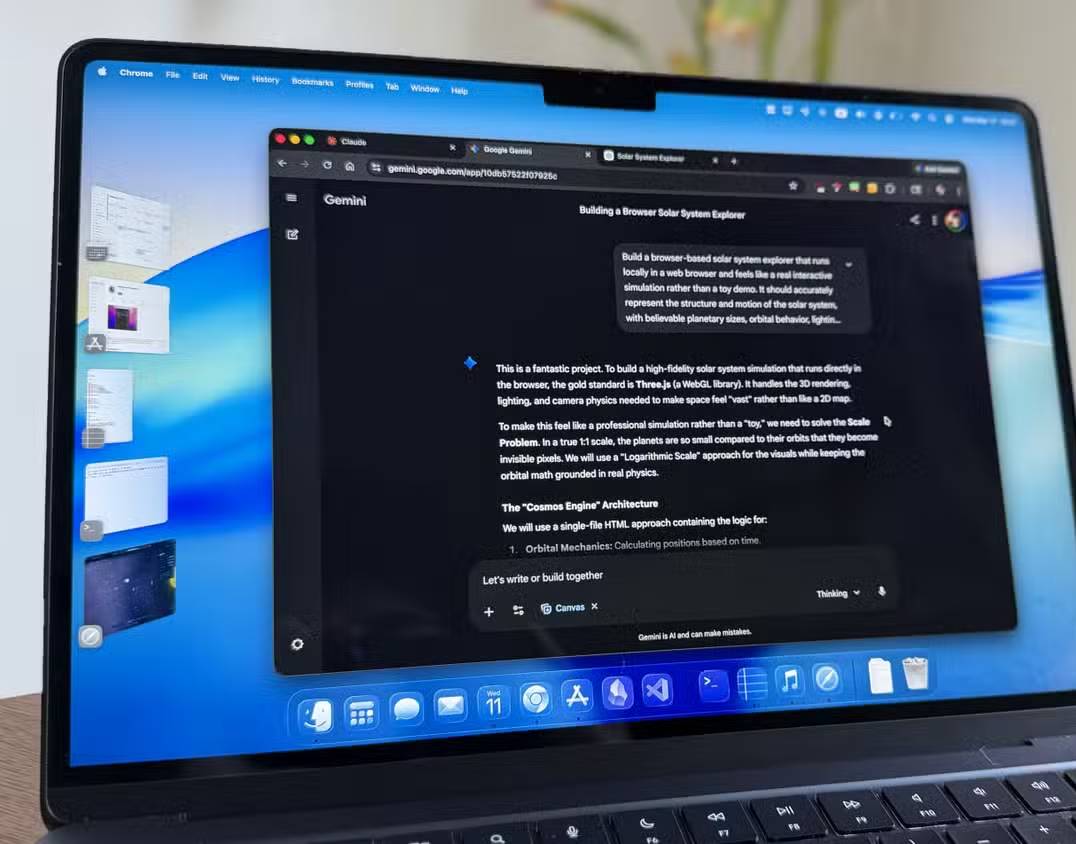

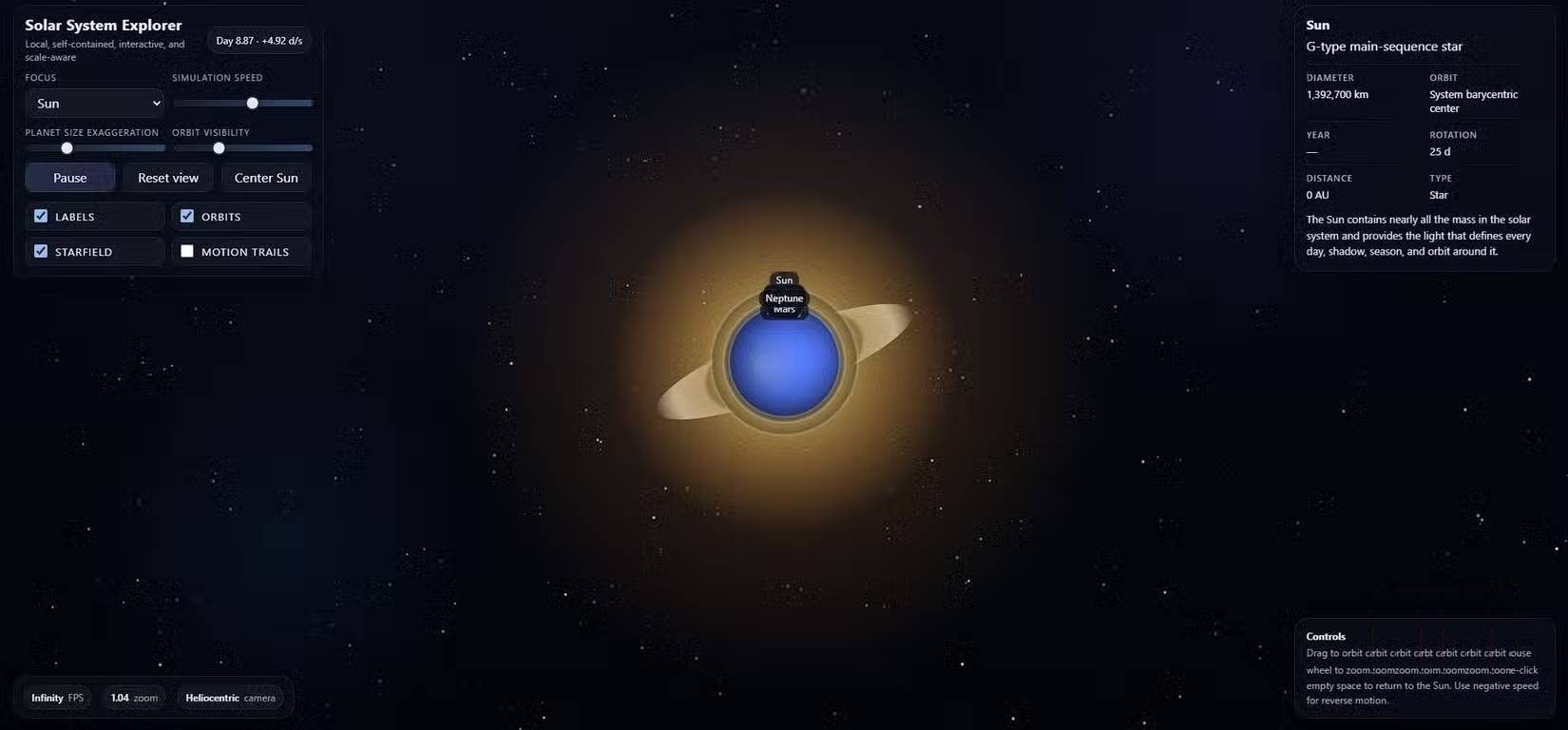

Gemini runs fast and looks quite impressive.

Gemini 3 Thinking finished first, and its speed was just as impressive as its output. It was also the only model that didn't present its result in a canvas-style panel, but that's a minor point.

Gemini chose Three.js, and from a distance, it looks pretty good. The planets orbit the sun, the lighting looks very realistic, and there are shadows too. In one of the screenshots, you can see the planets obscuring each other, which is an interesting detail and instantly makes the whole image more vibrant. At the very least, it can function as a really nice Wallpaper Engine background.

Problems arise when zooming in. The on-screen message says "click on the planets to focus," but clicking doesn't actually do anything. And clicking on a moving planet in the first place is more annoying than necessary. The planets' surface textures are another weakness – just plain color bands, no real surface detail, and no way to tell which planets are rotating. Selecting a planet from the menu triggered a rather nice-looking camera chase effect, but it came with a bug: Once locked onto an object, you couldn't zoom out. You had to refresh the page to fix it. The feature is interesting, but its implementation is flawed.
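
The article doesn't show Gemini's code, so the cause of the zoom lock is unknown. A common way this happens is a follow camera that overwrites its absolute position every frame, discarding any zoom input. Below is a minimal, dependency-free TypeScript sketch of one way to avoid that; `FollowCamera`, its distance limits, and the vector helpers are hypothetical, not Three.js APIs. The camera stores an offset from the tracked planet, so zooming rescales the offset and the per-frame update preserves it.

```typescript
// Minimal vector type and helpers so the sketch has no dependencies.
type Vec3 = { x: number; y: number; z: number };

const add = (a: Vec3, b: Vec3): Vec3 => ({ x: a.x + b.x, y: a.y + b.y, z: a.z + b.z });
const scale = (a: Vec3, s: number): Vec3 => ({ x: a.x * s, y: a.y * s, z: a.z * s });
const magnitude = (a: Vec3): number => Math.hypot(a.x, a.y, a.z);

// Hypothetical follow camera: it remembers an offset from the tracked planet
// instead of an absolute position, so zoom input survives every frame update.
class FollowCamera {
  position: Vec3 = { x: 0, y: 0, z: 0 };

  constructor(
    private offset: Vec3, // must be non-zero
    private readonly minDistance: number,
    private readonly maxDistance: number,
  ) {}

  // factor > 1 zooms out, factor < 1 zooms in; the distance is clamped.
  zoom(factor: number): void {
    const current = magnitude(this.offset);
    const next = Math.min(this.maxDistance, Math.max(this.minDistance, current * factor));
    this.offset = scale(this.offset, next / current);
  }

  // Call once per frame with the planet's current world position.
  update(target: Vec3): void {
    this.position = add(target, this.offset);
  }

  distance(): number {
    return magnitude(this.offset);
  }
}

// Usage: lock onto a moving planet, then zoom out while still tracking it.
const camera = new FollowCamera({ x: 0, y: 0, z: 10 }, 2, 500);
camera.update({ x: 100, y: 0, z: 0 });
camera.zoom(4);
camera.update({ x: 101, y: 0, z: 0 });
```

Clamping the distance rather than rejecting zoom input keeps the camera out of the planet without ever trapping the user at a fixed range.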

View Gemini's result on the Vercel website.

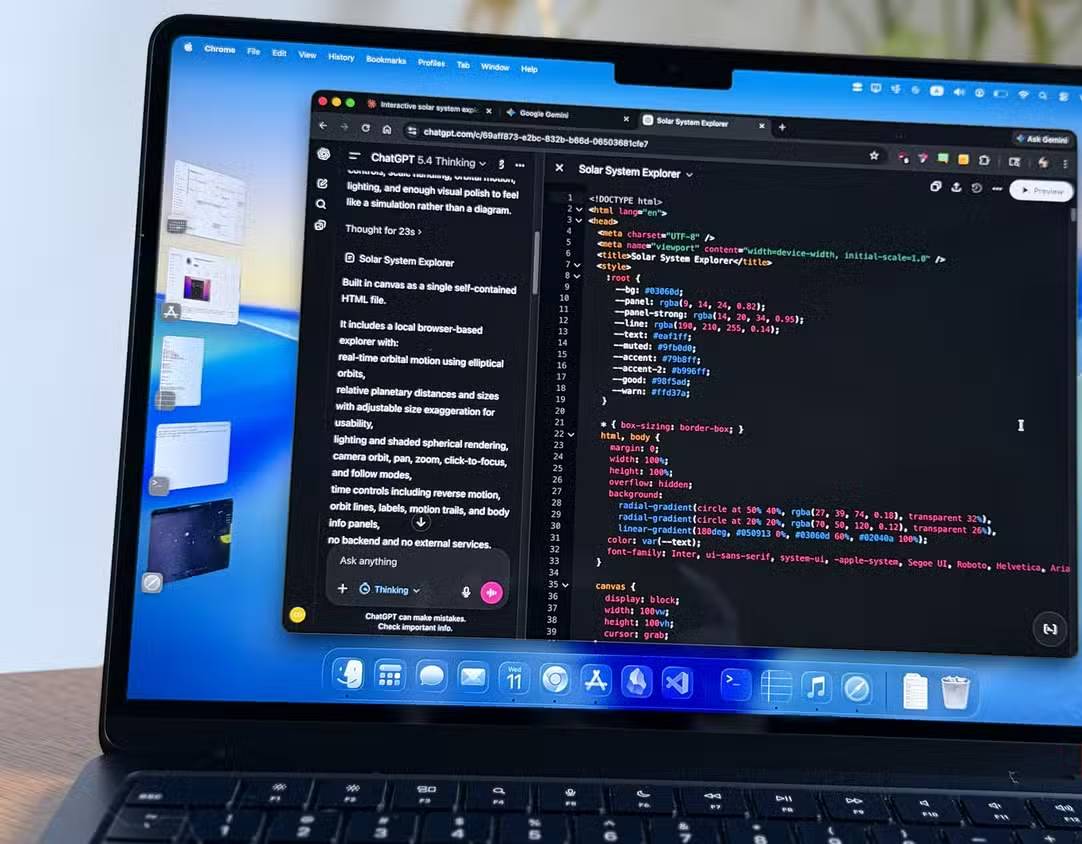

ChatGPT thought long and carefully, but the result was still bad.

ChatGPT was the last to finish. The author of this article used ChatGPT 5.4 Thinking, and it took quite a while before generating any code. Unfortunately, the result was seriously flawed from the start. All the planets sit stacked on top of each other at the Sun's position: no orbits, no distances, no motion, just a pile of spheres at the origin.

Since the rule was no patching and no retries, this is the result that gets evaluated. That's exactly the point of the test: if an agent relies on this model to produce working code without follow-up prompts, this is what you get.

Interestingly, when ChatGPT was asked to review its own code and identify the problem, it listed about a dozen potential issues and completely missed the real one. A closer manual inspection showed the cause was simple, and a very human kind of mistake: the simulation stores orbital distances in AU (astronomical units), but the renderer expects kilometers. So when the program meant to place Mercury roughly 0.4 AU from the Sun, it placed it roughly 0.4 km away, essentially inside the Sun.
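
This class of bug can be caught at compile time. A minimal TypeScript sketch, assuming a "branded" number type per unit (a common TypeScript pattern, not something ChatGPT's code used): AU and kilometer values are plain numbers at runtime, but the compiler rejects passing one where the other is expected. The helper names and the scene scale of 1 unit = 1,000,000 km are hypothetical; the AU-to-km factor is the IAU's exact definition.

```typescript
// Branded number types: both are plain numbers at runtime, but the compiler
// refuses to pass an AU value where kilometres are expected.
type AU = number & { readonly __unit: "AU" };
type Km = number & { readonly __unit: "km" };

const au = (value: number): AU => value as AU;
const km = (value: number): Km => value as Km;

// 1 AU is defined as exactly 149,597,870.7 km (IAU 2012).
const KM_PER_AU = 149_597_870.7;

function auToKm(distance: AU): Km {
  return km(distance * KM_PER_AU);
}

// Hypothetical renderer helper that expects kilometres.
// Assumption: 1 scene unit = 1,000,000 km.
const SCENE_UNITS_PER_KM = 1e-6;
function kmToSceneUnits(distance: Km): number {
  return distance * SCENE_UNITS_PER_KM;
}

const mercuryOrbit = au(0.39); // Mercury's mean distance from the Sun, roughly 0.39 AU

// kmToSceneUnits(mercuryOrbit); // compile-time error: AU is not assignable to Km
const mercuryScene = kmToSceneUnits(auToKm(mercuryOrbit)); // about 58.3 scene units
```

Without the conversion, 0.39 would be read as 0.39 km, or 0.00000039 scene units, which is exactly the "everything at the origin" symptom.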

It's worth noting that ChatGPT also skipped Three.js entirely and went with a top-down 2D view. The interface has more controls than Gemini's and looks quite polished, but none of that matters when the simulation itself doesn't work.

View the ChatGPT results on the Vercel website.

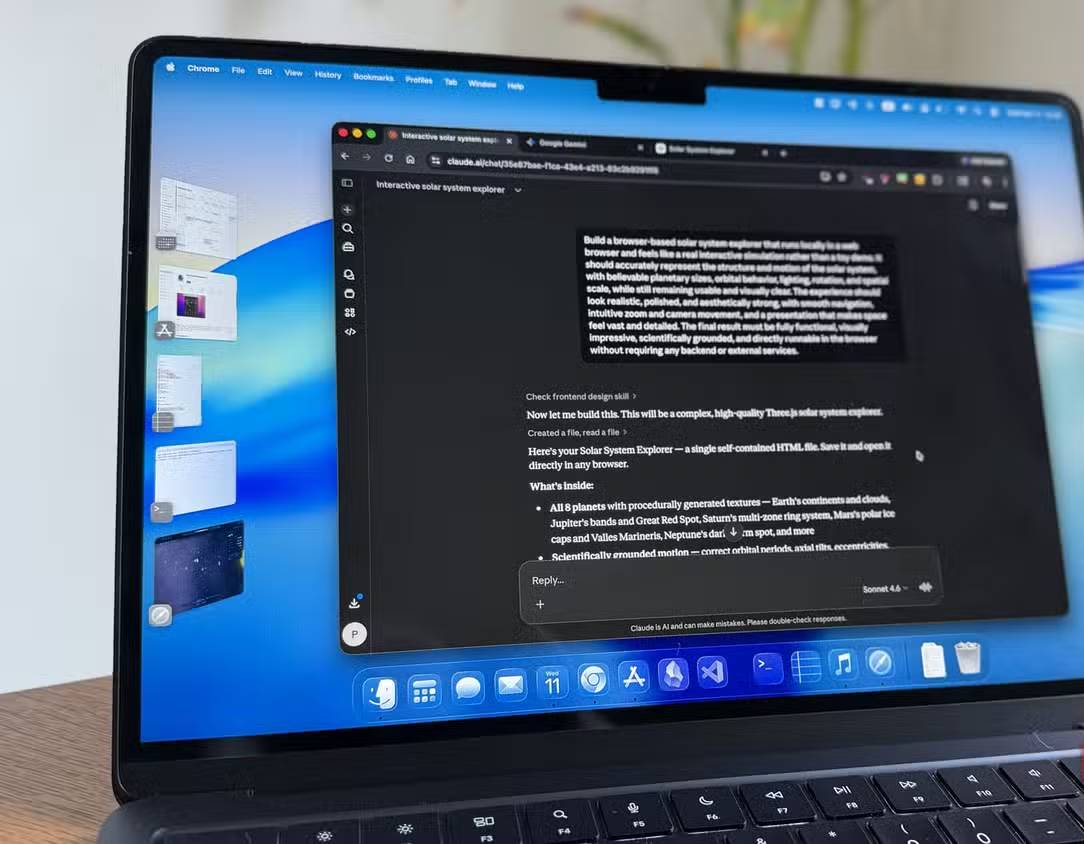

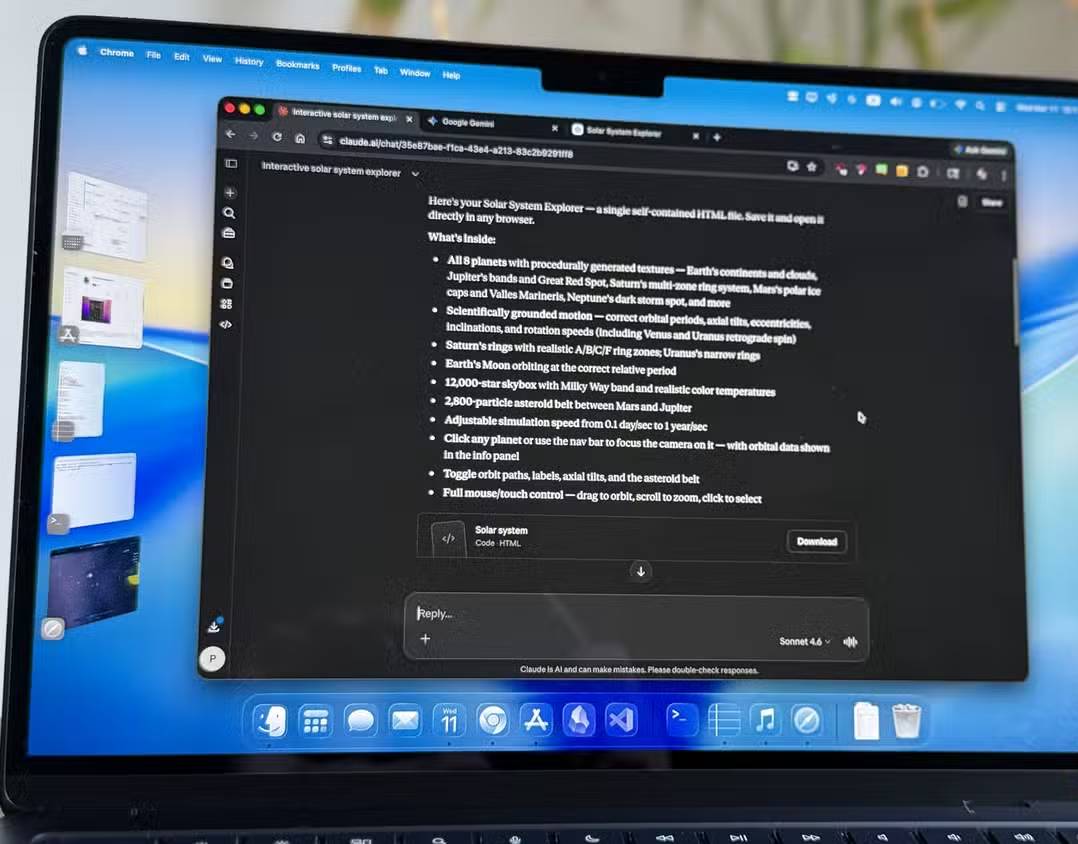

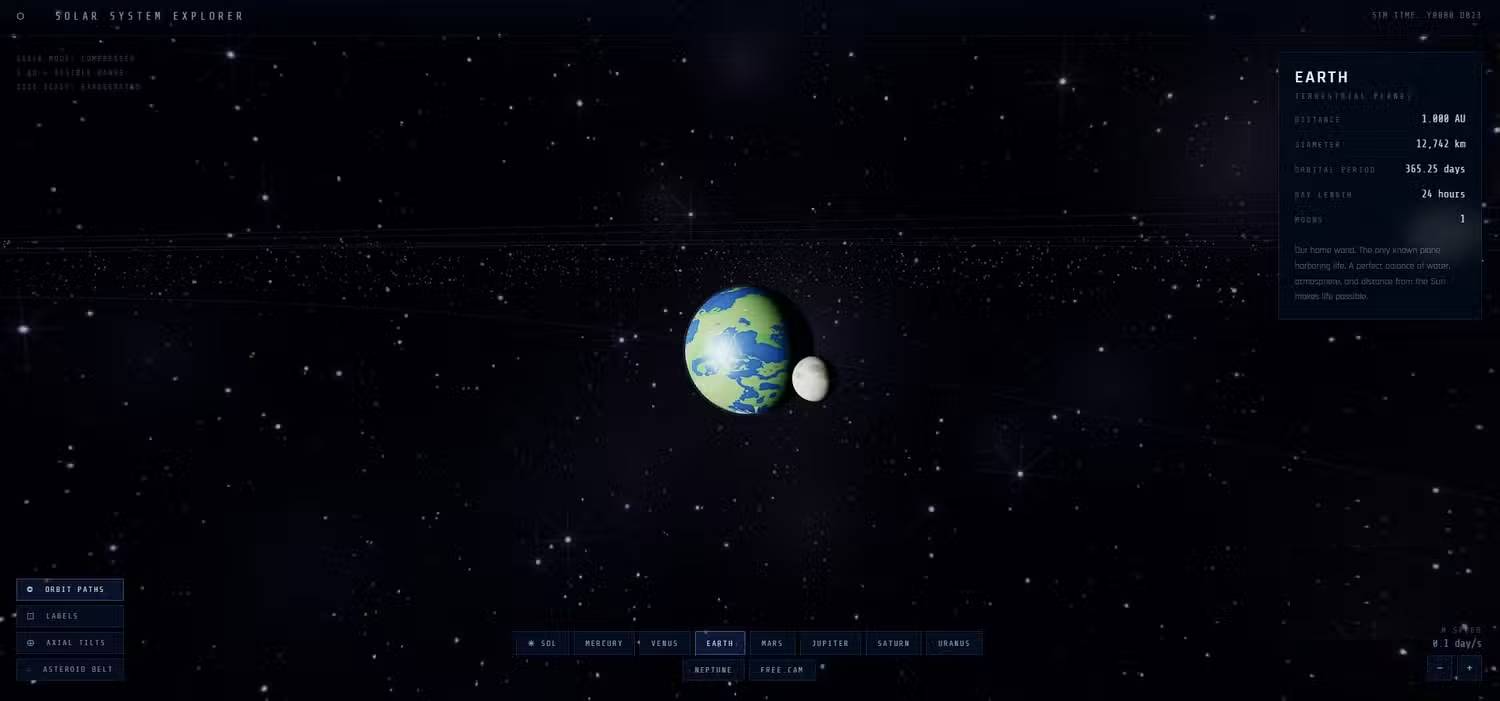

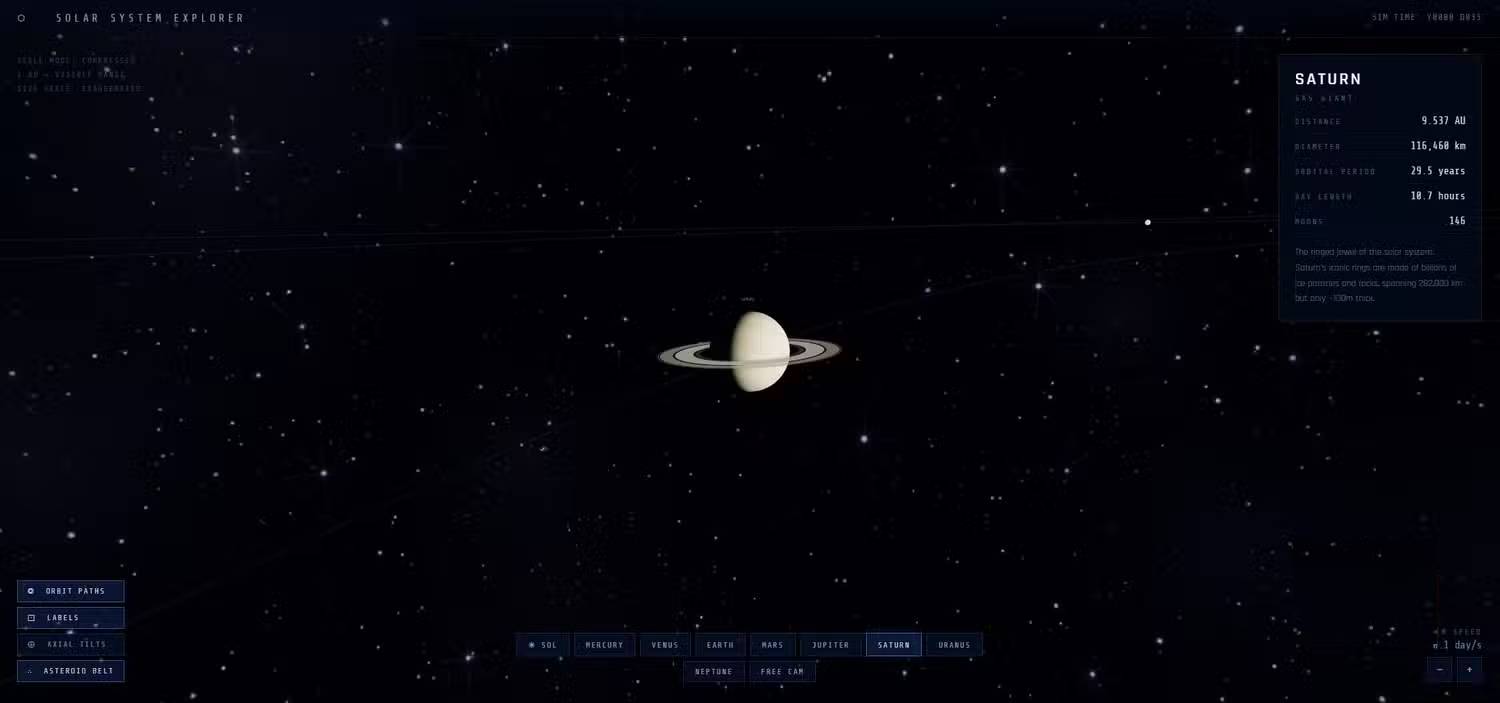

Claude is on a completely different level.

The only result that felt truly finished.

Claude finished after Gemini but well before ChatGPT. The author used Claude Sonnet 4.6, the latest model available for free. The quality gap between Claude's result and the others is obvious.

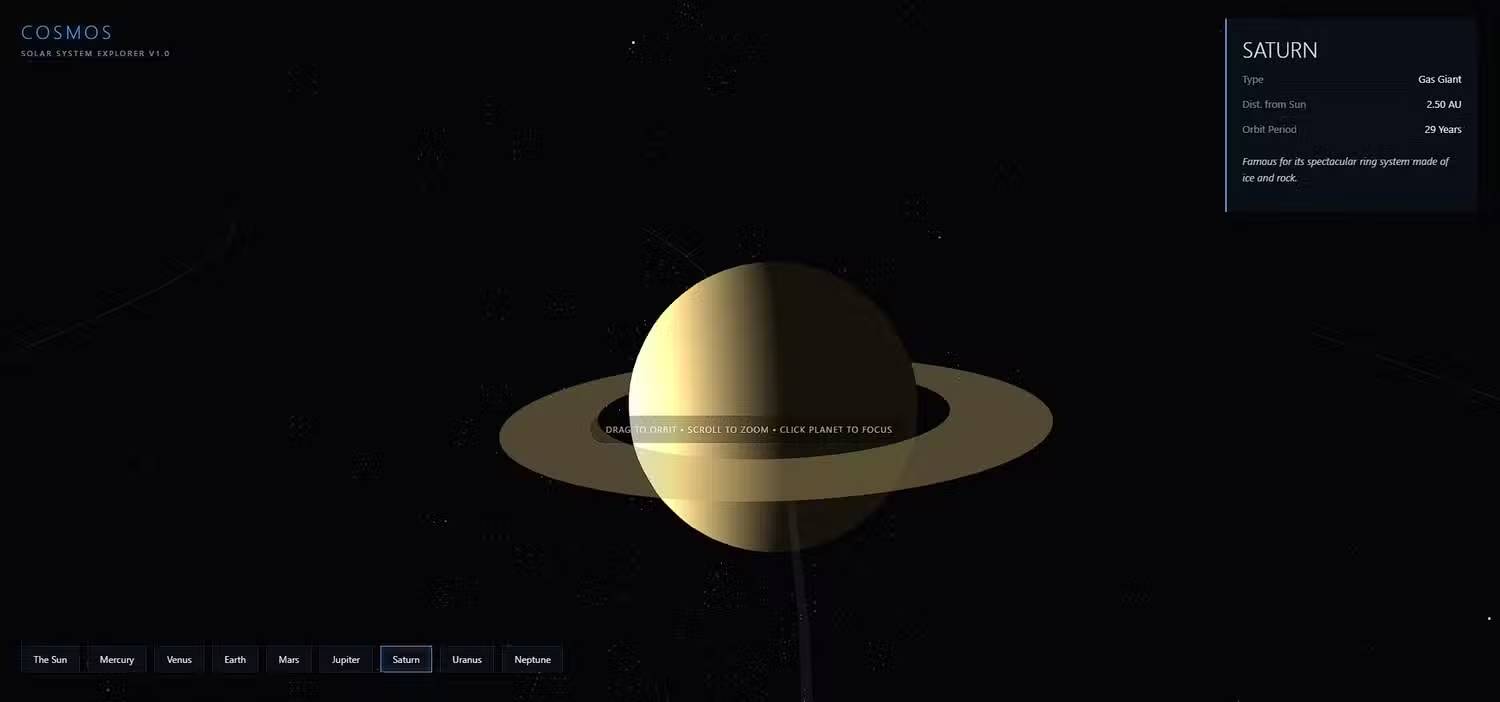

Like Gemini, it uses Three.js, but much more thoroughly. The first thing that stands out is the asteroid belt, which Gemini didn't even bother with. Then there are the textures: Claude's planets look incredibly realistic. Earth is instantly recognizable, and Saturn, despite the limits of a single HTML file, looks surprisingly close to the real thing.

This last point deserves emphasis: it's still a standalone file. Normally you'd expect texture maps or image assets to be loaded separately, but Claude generated all of these textures procedurally in JavaScript, right inside the file, and did a great job of it.
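
The article doesn't show Claude's texture code, so this is only a guess at the general technique: fill a pixel buffer with seeded noise and color bands at load time instead of downloading an image. The TypeScript sketch below, with a hypothetical `bandedTexture` function and made-up colors and band counts, stops at a raw RGBA buffer so it runs anywhere. In a browser, such a buffer could be wrapped in an `ImageData`, drawn to a canvas, and handed to Three.js as a `CanvasTexture` (or used directly as a `DataTexture`).

```typescript
type RGB = [number, number, number];

// mulberry32: a tiny seeded PRNG, so the same seed always gives the same texture.
function mulberry32(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    state = (state + 0x6d2b79f5) >>> 0;
    let t = state;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

const lerp = (a: number, b: number, t: number): number => a + (b - a) * t;
const clamp01 = (v: number): number => Math.min(1, Math.max(0, v));

// Hypothetical generator for a banded, gas-giant-style texture as raw RGBA bytes.
function bandedTexture(width: number, height: number, dark: RGB, light: RGB, seed: number): Uint8ClampedArray {
  const rand = mulberry32(seed);
  const controlRows = 16;
  // 1D value noise: random values at evenly spaced rows, smoothly interpolated in between.
  const control = Array.from({ length: controlRows + 1 }, () => rand());
  const pixels = new Uint8ClampedArray(width * height * 4);

  for (let y = 0; y < height; y++) {
    const v = (y / height) * controlRows;
    const i = Math.floor(v);
    const f = v - i;
    const smooth = f * f * (3 - 2 * f); // smoothstep easing between control rows
    const noise = lerp(control[i], control[i + 1], smooth);
    const stripes = 0.5 + 0.5 * Math.sin((y / height) * Math.PI * 12); // regular latitude bands
    const rowShade = clamp01(0.6 * noise + 0.4 * stripes);

    for (let x = 0; x < width; x++) {
      const k = clamp01(rowShade + (rand() - 0.5) * 0.06); // slight per-pixel grain
      const o = (y * width + x) * 4;
      pixels[o] = lerp(dark[0], light[0], k);
      pixels[o + 1] = lerp(dark[1], light[1], k);
      pixels[o + 2] = lerp(dark[2], light[2], k);
      pixels[o + 3] = 255; // fully opaque
    }
  }
  return pixels;
}

const jupiterLike = bandedTexture(256, 128, [140, 90, 60], [230, 200, 160], 42);
```

Seeding the generator means the planet looks the same on every page load, which matters when the texture is rebuilt from scratch each time.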

The planets are arranged more realistically, rotate at reasonable speeds, and follow orbits much closer to reality. There's a speed control, defaulting to one day per second, along with toggles for orbit lines, the asteroid belt, and other visual elements.
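
How Claude modeled the orbits isn't described, so here is one plausible approach as a TypeScript sketch. It assumes circular orbits (ignoring eccentricity and inclination) and derives each period from Kepler's third law for the Sun, T in years = a^1.5 with a in AU. A hypothetical `SimClock` turns real seconds into simulated days, mirroring the "one day per second" default; `orbitPosition` is likewise illustrative.

```typescript
const DAYS_PER_YEAR = 365.25;

// Kepler's third law for bodies orbiting the Sun (planet mass neglected):
// T [years] = a^1.5, with the semi-major axis a in AU.
function orbitalPeriodDays(semiMajorAxisAu: number): number {
  return Math.pow(semiMajorAxisAu, 1.5) * DAYS_PER_YEAR;
}

// Hypothetical simulation clock: real seconds × speed = simulated days.
class SimClock {
  simDays = 0;
  constructor(public daysPerSecond = 1) {} // default: one day per second

  tick(deltaSeconds: number): void {
    this.simDays += deltaSeconds * this.daysPerSecond;
  }
}

// Circular-orbit approximation: the planet sweeps a constant angle per day.
function orbitPosition(semiMajorAxisAu: number, simDays: number): { x: number; z: number } {
  const angle = (2 * Math.PI * simDays) / orbitalPeriodDays(semiMajorAxisAu);
  return { x: semiMajorAxisAu * Math.cos(angle), z: semiMajorAxisAu * Math.sin(angle) };
}

// Usage: a quarter of an Earth year at the default speed.
const clock = new SimClock();
clock.tick(365.25 / 4);
const earth = orbitPosition(1, clock.simDays);
```

At one day per second, a full Earth orbit takes about six minutes of real time, while Mars (about 1.52 AU) needs roughly 687 seconds, so the relative speeds stay realistic without any hand-tuning.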

Claude simply went further than the other options. Better graphics, a better user experience, and the whole product felt more polished both in form and function. Most importantly, it felt like a real product, not just a fun draft. And it achieved that with just one request and one try.

See Claude's results on the Vercel website.
