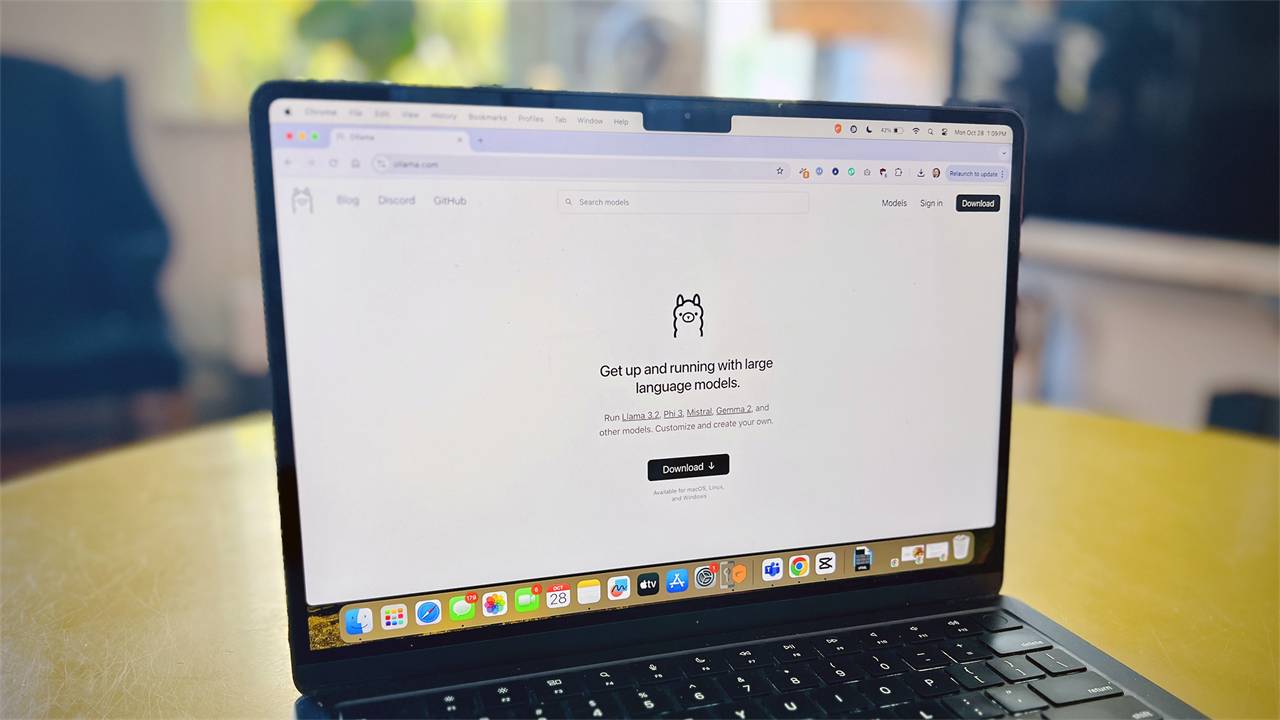

What is Ollama?

Ollama stands for Omni-Layer Learning Language Acquisition Model. Essentially, Ollama is a groundbreaking platform that democratizes access to large-scale language models (LLMs) by allowing users to run them locally on their computers. Developed with a vision to empower individuals and organizations, Ollama provides a user-friendly interface and allows access to multiple models through a single point of contact.

Key features of the Ollama framework

- Local execution : One of Ollama's standout features is its ability to run LLM locally, mitigating privacy concerns associated with cloud-based solutions. By delivering AI models directly to users' devices, Ollama ensures better data control and security while providing faster processing speeds and reducing reliance on external servers.

- Extensive model library : Ollama provides access to a vast library of pre-trained LLMs, including popular models like Llama 3. Users can choose from a range of models tailored to different tasks, domains, and hardware capabilities, ensuring flexibility and versatility in their AI projects.

- Seamless integration : Ollama integrates seamlessly with a wide range of tools, frameworks, and programming languages, making it easy for developers to integrate LLM into their workflows.

- Customization and Refinement : With Ollama, users have the ability to customize and refine LLMs to suit their specific needs and preferences. From rapid engineering to minimal learning patterns and fine-tuning processes, Ollama empowers users to shape the behavior and output of their LLMs, ensuring they align with desired goals.

Support for pre-trained models in Ollama

Ollama allows developers to run pre-trained, locally weighted, open-source language and multimodal models through a unified runtime and API. This eliminates the need to train models from scratch while reducing infrastructure complexity and computational costs, enabling rapid integration into applications.

- LLaMA 2 : A large, versatile language model suitable for text generation, inference, and instruction following tasks.

- Mistral : A high-performance model optimized for efficiency and robust reasoning capabilities.

- Gemma : A lightweight, guided model designed for conversational and task-oriented use cases.

- LLaVA : A multimodal model that combines linguistic and visual comprehension capabilities for visual recognition interactions.

Comparing Ollama to cloud-based LLMs

Here are the key differences between Ollama and other cloud-based LLMs:

| Criteria | Ollama (Local LLM) | Cloud-based LLM |

|---|---|---|

| Deployment model | Runs locally on a user's computer or a self-managed server. | Hosted and managed by third-party providers. |

| Data security | Data never leaves the local environment. | Data is transmitted to external servers. |

| Latency | Very low (no round-trip transmission time) | Network dependent; varies by region and load. |

| Cost structure | One-time hardware cost; no fee per token. | Payment per usage (tokens, requests, subscriptions) |

| Scalability | Limited by local hardware. | The potential for expansion is virtually limitless, offering great flexibility. |

| Diverse models | These are mostly open-source models (LLaMA, Mistral, Qwen, etc.). | Proprietary model + open-source model, often more advanced. |

Applications of Ollama

- Creative writing and content creation : Writers and content creators can leverage Ollama to overcome creative block, brainstorm content, and create diverse and engaging content across various genres and formats.

- Code creation and support : Developers can leverage Ollama's capabilities to create, explain, debug, and document code, optimizing their development workflow and improving code quality.

- Language translation and localization : Ollama's ability to understand and create languages makes it an invaluable tool for translation, localization, and multilingual communication, facilitating cross-cultural understanding and global collaboration.

Limitations of Ollama

- Hardware dependency : Maximum model performance and size are strictly limited by the local CPU/GPU, RAM, and VRAM, making large models slow or impractical on a typical user's computer.

- Scalability limitations : Ollama is optimized for local use and testing, not for distributed inference workloads, production-scale work, or high concurrency.

- Limitations of the modeling ecosystem : Limited access to supported open-source models, lack of availability of advanced or proprietary models, and slower adoption of the latest research releases.