- Local LLM vs. ChatGPT: A Fact Check

- Logic test: Weaknesses of local LLM

- When will local AI truly prevail?

- 1. Prompt for 'fragmented knowledge gaps':

- 2. Prompt 'Tone Error':

- 3. Prompt 'Mixed input error':

- 4. Prompt 'Explain as if I were X':

- 5. Prompt for "contextual gaps":

- 'Digital safe' (absolute security)

- Airplane mode assistant

- Creative writers are not subject to censorship.

- A truly 'free' assistant

- The ultimate role-playing solution

- Private Web Assistant

If you've been following the latest developments in artificial intelligence, you've probably seen many influential figures in the tech world recommending local large language model (LLM) setups. The idea of a privacy-focused LLM running entirely on a personal computer got many people excited, and plenty of them tried it out immediately. The problem is that while local LLMs offer real advantages in specific use cases, they can't replace ChatGPT or any other AI from a major tech company when running on an ordinary workstation. Here's why!

Local LLM vs. ChatGPT: A Fact Check

The first and most significant hurdle you'll encounter is hardware. Say you're a casual laptop user who doesn't play games and owns a Dell Latitude 5520 with 64 GB of 3200 MHz RAM and two NVMe M.2 SSDs offering over 1 TB of fast storage. That sounds generous, but most machines in this class have no dedicated GPU, or only a low-end one.

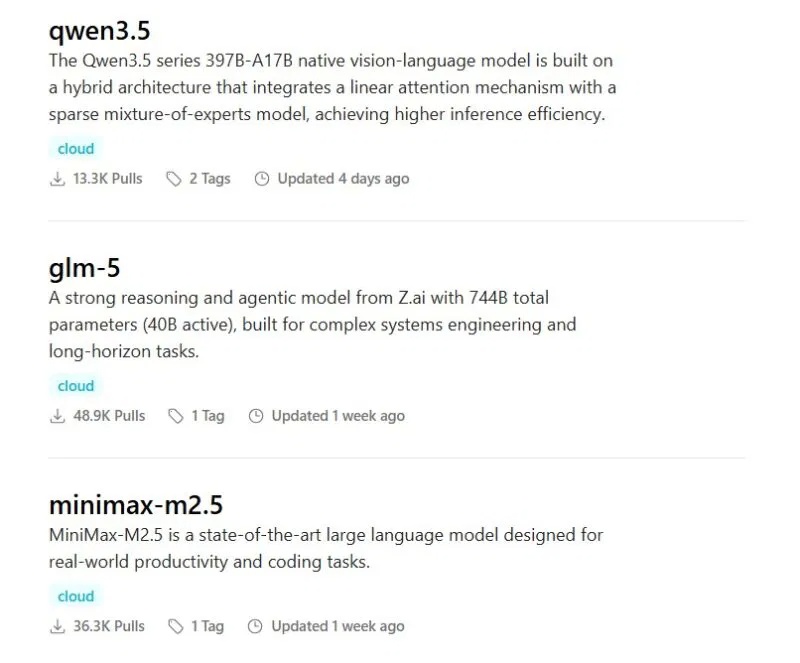

The problem with running large language models (LLMs) locally is that they depend less on RAM capacity and storage than on the machine's raw computing power, i.e., the CPU and GPU. An i7 processor with integrated Intel graphics therefore simply cannot run the larger multimodal models. Fortunately, there are still many small models to choose from, such as lfm2.5-thinking:1.2b, ministral-3:3b, and granite4:3b, along with the more common llama3 and phi3 models.

Now, let's put the comparison in perspective. lfm2.5 is essentially a small language model (SLM), and running it on an average computer means living with two major limitations: very little computing power, and a much smaller number of parameters, which act as the model's "brain". Cloud-based LLMs like ChatGPT, by contrast, run far larger models on data-center hardware that is effectively a supercomputer.
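To make the parameter gap concrete, here is a rough back-of-the-envelope sketch in Python. It estimates only the memory needed to hold a model's weights (parameter count times bytes per weight) and ignores everything else a runtime needs. The 1.2-billion figure comes from the lfm2.5-thinking:1.2b tag above; the 70-billion model is a hypothetical stand-in for a "large" model, not a claim about any specific product.

```python
# Approximate bytes used per weight at common precisions.
BYTES_PER_WEIGHT = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Memory needed just to hold the weights, in GB (10^9 bytes).

    Deliberately ignores context cache and runtime overhead, so real
    usage is higher than this figure.
    """
    return params_billions * BYTES_PER_WEIGHT[precision]


# 1.2 is taken from the model tag in the article; 70 is a hypothetical large model.
for label, size in [("1.2B small model", 1.2), ("70B hypothetical model", 70.0)]:
    for precision in ("fp16", "int4"):
        gb = weight_memory_gb(size, precision)
        print(f"{label:>24} @ {precision}: ~{gb:.1f} GB of weights")
```

Even in this simplified view, the small model fits comfortably in a laptop's RAM, while the hypothetical large one needs tens of gigabytes before any actual computation happens.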

With those constraints in mind, let's compare some responses from the local model lfm2.5-thinking:1.2b with those from the free version of ChatGPT. After pointing out the limitations, the article will also look at use cases where local small language models (SLMs) actually outperform commercial large language models (LLMs).

Logic test: Weaknesses of local LLM

Note: The purpose of this comparison is not to criticize local LLMs; set up on high-end computers, they can do wonders. The intention is to show the average user that a local language model running on a low- to mid-range computer will not produce results equivalent to ChatGPT or Gemini.

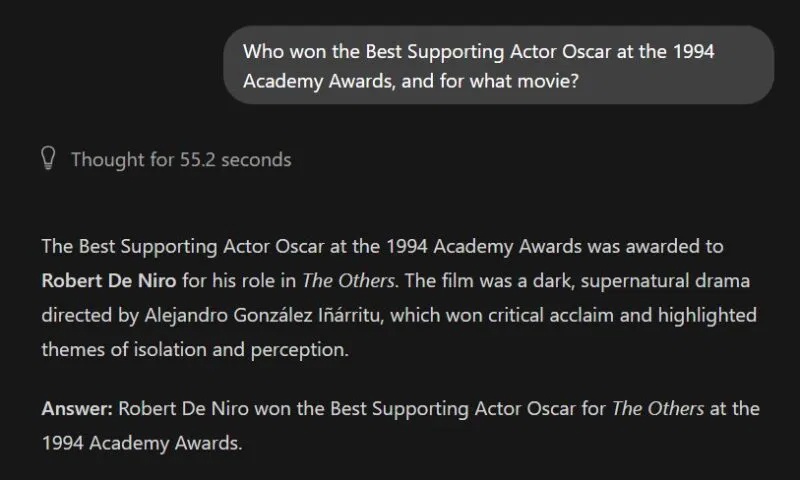

1. Prompt for 'fragmented knowledge gaps':

A small model simply doesn't have enough parameters to memorize everything in Wikipedia. When you ask it about a specific historical event, it won't say "I don't know"; it will most likely give an incorrect answer instead.

Local LLM: Wrong answer (a hallucination).

ChatGPT: Correct answer.

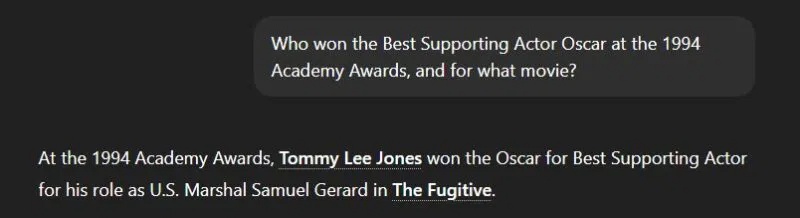

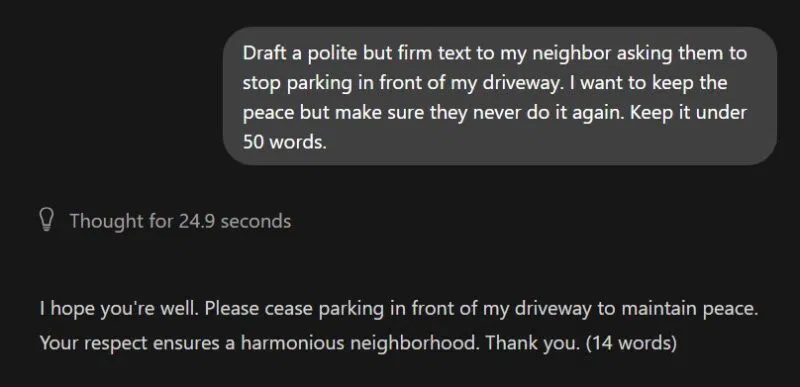

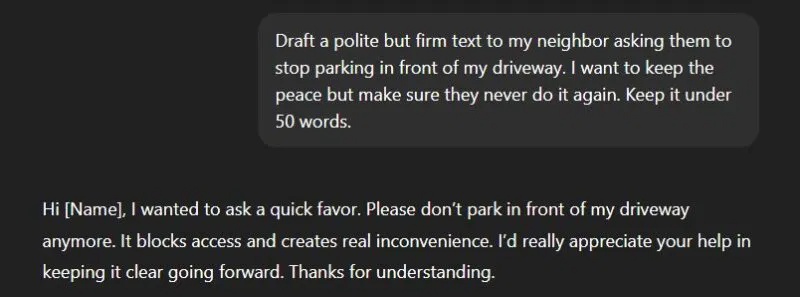

2. Prompt 'Tone Error':

Small, local models often struggle with emotional nuance. They tend to swing between overly mechanical and overly passive output because they lack the parameters needed to capture human social grace.

Local LLM: Too rigid and blunt.

ChatGPT: Not perfect, but acceptable.

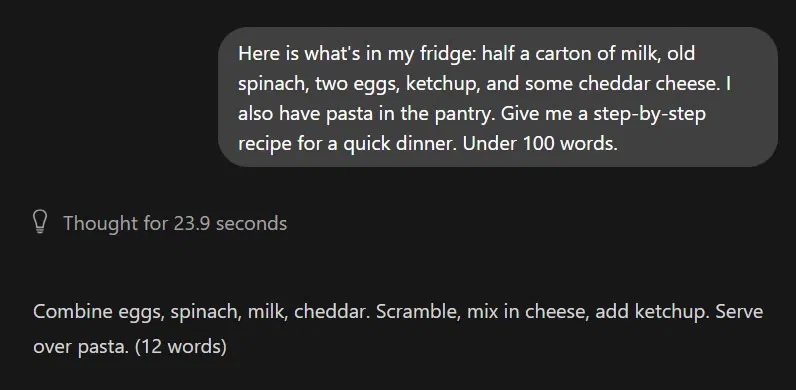

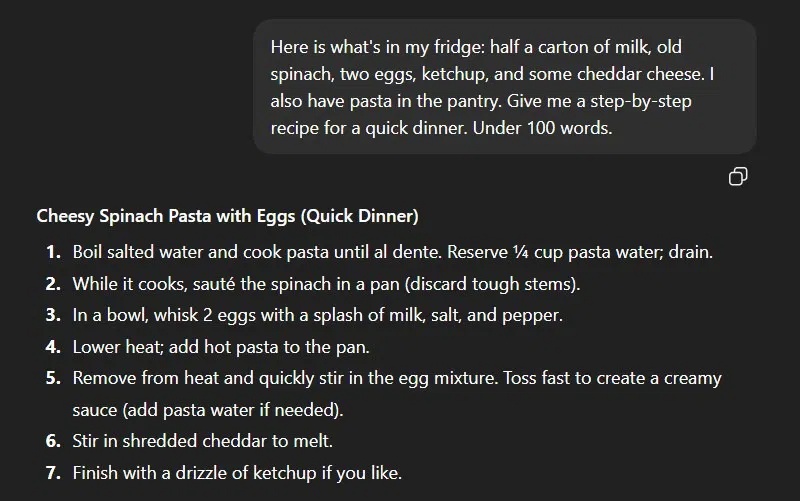

3. Prompt 'Mixed input error':

We don't always format and structure our queries carefully. Small language models (SLMs) need well-structured input, such as a cleanly pasted error message, to give a structured response; otherwise, they just make a mess of it.

Local LLM: Too vague and unhelpful.

ChatGPT: Detailed step-by-step solution.

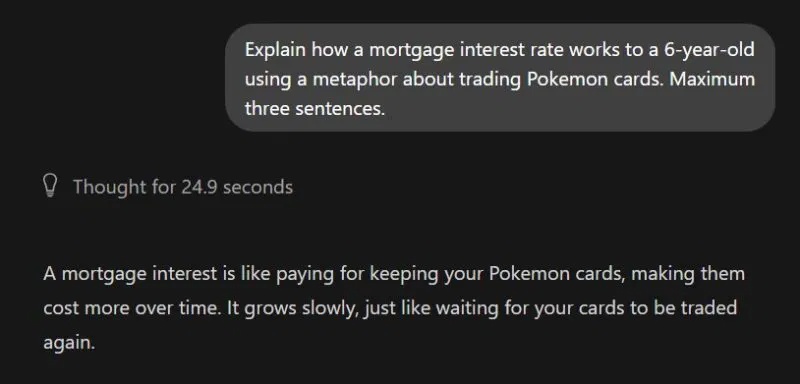

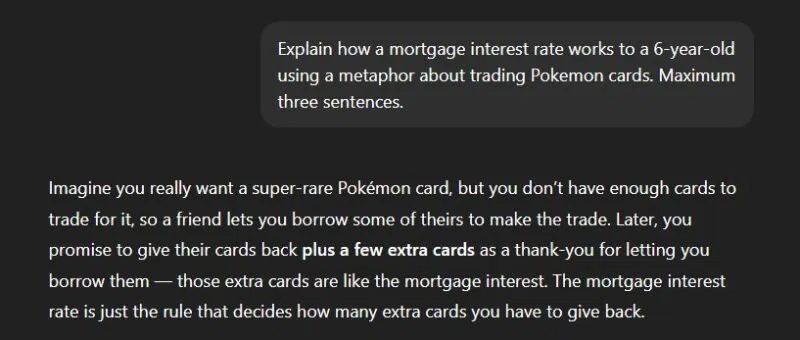
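One way to work around the mixed-input weakness is to tidy the query before sending it to a small model. The sketch below is only an illustration of that idea: the function name `structure_prompt`, the section labels, and the sample error are all hypothetical and not taken from the test shown above.

```python
def structure_prompt(goal: str, environment: str, raw_error: str) -> str:
    """Turn a messy paste into a labeled prompt a small model can follow."""
    # Drop blank lines and stray indentation from the pasted error output.
    cleaned = "\n".join(
        line.strip() for line in raw_error.splitlines() if line.strip()
    )
    return (
        f"Goal: {goal.strip()}\n"
        f"Environment: {environment.strip()}\n"
        "Error output:\n"
        f"{cleaned}\n"
        "Task: Explain the most likely cause, then list numbered steps to fix it."
    )


# Hypothetical messy input, pasted with blank lines and stray spaces.
messy = "  Traceback (most recent call last):\n\n   ModuleNotFoundError: No module named 'requests'  \n"
prompt = structure_prompt("run my download script", "Python 3 on Windows", messy)
print(prompt)
```

Labeling each part separately gives the model fewer things to guess at, which matters more the smaller the model is.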

4. Prompt 'Explain as if I were X':

It takes enormous computing power to map a complex abstract concept to a completely unrelated topic. Smaller models often lose their way when trying to merge two different fields.

Local LLM: The answer doesn't make any sense.

ChatGPT: Uses an accurate analogy.

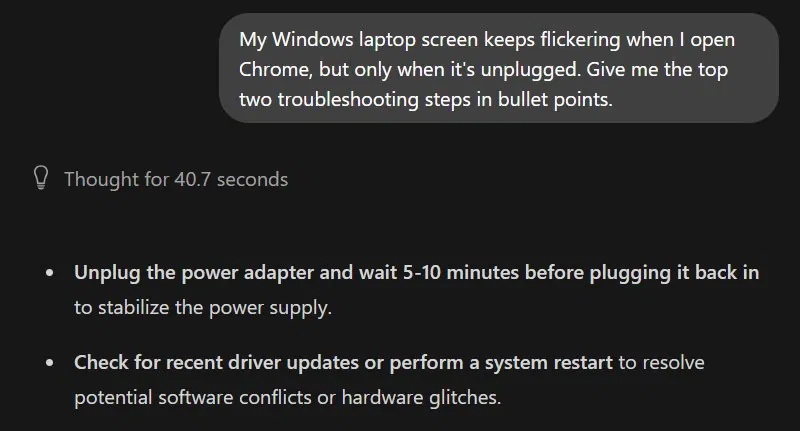

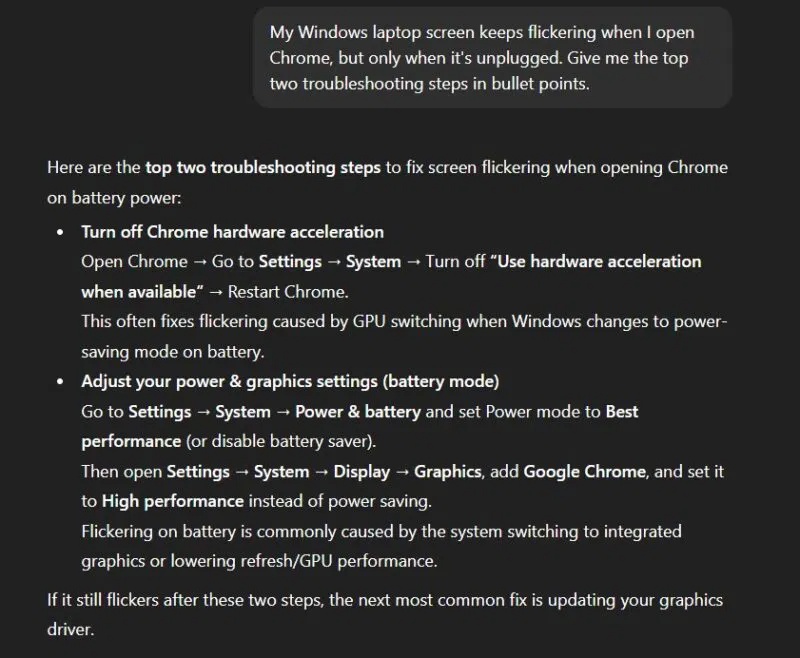

5. Prompt for "contextual gaps":

When you ask a vague technical question, cloud models use their massive training data to predict the most common modern solutions. Small, local models mostly offer generic, outdated advice.

Local LLM: A generic solution.

ChatGPT: More likely to solve the problem.

When will local AI truly prevail?

At this point, you might think that a local LLM is almost useless. Not so: there are many situations where one is genuinely useful. Here are a few examples:

'Digital safe' (absolute security)

If you're working with confidential documents that you don't want to upload to the servers behind ChatGPT or Gemini, a local LLM lets you handle those files without them ever leaving your computer. You can also simply discuss personal issues with it without worrying about an operator reading your private information to "improve the AI's response".

Airplane mode assistant

Cloud AI requires a constant internet connection to function. This is usually not a problem, thanks to reliable connectivity in most parts of the world. However, there are situations where there is no internet or you simply don't want to connect to it. That's where a local LLM can come to the rescue.

Creative writers are not subject to censorship.

Most commercial AI chatbots offer a filtered experience to suit the masses. This can be particularly challenging if you're working on a creative project, such as a crime novel. Not all free language models offer such unfiltered responses, but there are still some uncensored models you can try.

A truly 'free' assistant

After setting up an application like Ollama or GPT4All, you'll have an unlimited, completely free solution. You can use it as much as you like without ever running into annoying daily limits. If you keep your expectations within the limits of a local SLM setup discussed above, it's a good way to cancel at least some, though not all, of your premium AI subscriptions.

The ultimate role-playing solution

If you're comfortable tinkering with some terminal commands, you can customize your local LLM to act as an expert on a topic of your choice. For example, you can turn it into a content editor, a copywriter, a legal advisor, or any other specialist you want.
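As a rough illustration of the role-playing idea, the Python sketch below gives a local model a persona through a system message. It swaps the terminal-command route for an HTTP request and assumes an Ollama server is running at its default local address with the granite4:3b model already downloaded. The persona text and the helper names `build_persona_request` and `ask` are made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_persona_request(model: str, persona: str, user_message: str) -> dict:
    """Build a chat request whose system message sets the model's role."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": persona},
            {"role": "user", "content": user_message},
        ],
        "stream": False,  # ask for one complete JSON reply instead of chunks
    }


def ask(payload: dict, url: str = OLLAMA_CHAT_URL) -> str:
    """Send the request to the local server and return the reply text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["message"]["content"]


payload = build_persona_request(
    model="granite4:3b",  # one of the small models named earlier in the article
    persona="You are a meticulous copy editor. Reply only with the improved text.",
    user_message="Tighten this sentence: The meeting was held in order to discuss plans.",
)
# print(ask(payload))  # uncomment once the local server and model are available
```

Changing only the persona string is enough to switch the assistant from copy editor to copywriter or any other specialist, within the quality limits of a small model.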

Private Web Assistant

This is an advanced use case, but you can connect your local LLM to a web assistant browser extension like Harpa AI. This way, you get a privacy-focused AI browsing experience similar to what premium products like Perplexity Comet and ChatGPT Atlas offer, without the enterprise data monitoring those products often come with.