Running AI models locally has become a popular idea, especially for privacy-focused use cases and edge computing. But most examples assume powerful hardware. This project explores a narrower question: can a Raspberry Pi practically run a local AI language model and stay stable while doing so?

The goal is not raw performance but an understanding of the limits (memory, storage, temperature) and of how well a single-board computer copes with modern AI workloads.

Why try running a local AI model on a Raspberry Pi?

The Raspberry Pi is not designed for AI inference. It has limited RAM, no dedicated GPU for inference, and typically relies on an SD card for storage. On paper, this makes it a poor candidate for running language models.

At the same time, that's precisely what makes it interesting. If AI can run here, even slowly, it opens the door to offline assistants, edge automation, and educational experimentation. The value lies not in speed, but in insight.

Initial efforts and practical verification

The first attempts were unstable. Models would load but fail mid-inference, containers would exit without any apparent error, and the system would sometimes hang completely. At first, it seemed as though the hardware simply couldn't handle the workload.

The turning point came from stepping back and treating this as a systems issue, not an AI problem. The errors weren't random; they were symptoms of resources running out silently in the background.

Choose a model that matches your hardware

One of the most important lessons learned is that choosing the right model matters more than choosing the right software. Large, popular models consistently failed on the Raspberry Pi due to memory constraints. In testing, smaller models such as TinyLlama proved far more practical on the Pi 4 and Pi 5 boards.

The responses are slower and simpler, but the system is more stable, and stability is the real goal on this platform.
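One rough way to sanity-check a model before downloading it is to estimate how much memory its weights need. The sketch below is a back-of-the-envelope calculation, not a measurement. The function names, the 20% overhead factor, and the 1 GB reserved for the operating system and Docker are all assumptions chosen for illustration. The only outside fact it relies on is that TinyLlama has roughly 1.1 billion parameters.

```python
def estimate_model_ram_gb(params_billions: float,
                          bits_per_weight: float,
                          overhead_factor: float = 1.2) -> float:
    """Rough RAM needed to hold a model, in GB (1 GB = 1e9 bytes).

    overhead_factor is an assumed allowance for context buffers and
    runtime bookkeeping, not a measured value.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * overhead_factor / 1e9


def fits_in_ram(params_billions: float,
                bits_per_weight: float,
                total_ram_gb: float,
                reserved_gb: float = 1.0) -> bool:
    """True if the estimate fits after reserving RAM for the OS.

    reserved_gb is an assumed budget for the OS, Docker, and the web UI.
    """
    needed = estimate_model_ram_gb(params_billions, bits_per_weight)
    return needed <= total_ram_gb - reserved_gb


if __name__ == "__main__":
    # Compare a 1.1B model and a 7B model, both 4-bit, on a 4 GB board.
    for name, params in [("TinyLlama 1.1B", 1.1), ("generic 7B", 7.0)]:
        need = estimate_model_ram_gb(params, bits_per_weight=4)
        ok = fits_in_ram(params, 4, total_ram_gb=4.0)
        print(f"{name}: ~{need:.2f} GB needed, fits on 4 GB board: {ok}")
```

With these assumed numbers, a 4-bit TinyLlama needs well under 1 GB, while a 7-billion-parameter model at the same quantization exceeds the budget of a 4 GB board.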

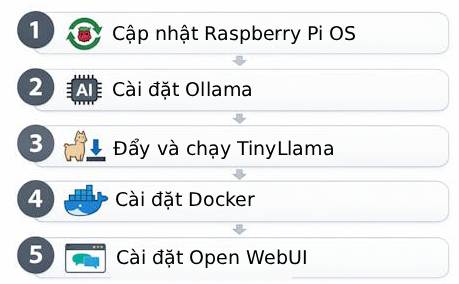

Deployment using Docker, Ollama, and Open WebUI

Docker is used to keep the setup clean and repeatable. Ollama handles model management, while Open WebUI provides an easy-to-use interface. This combination works well, but Docker adds its own overhead and needs careful tuning on systems with low RAM.

Without a memory and storage strategy, using containers can actually make things worse on small devices.
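As an illustration of what a memory and storage strategy can look like, the sketch below assembles a `docker run` command for the Ollama container with a memory cap and with model storage on a separate drive. It is not the exact command used in this project. The 3 GB limit and the `/mnt/ssd/ollama` path are hypothetical values. The image name, port 11434, and the `/root/.ollama` model directory follow Ollama's published Docker instructions, and `--memory` is a standard `docker run` option.

```python
import shlex


def ollama_run_command(memory_limit: str = "3g",
                       models_dir: str = "/mnt/ssd/ollama") -> list[str]:
    """Build a docker run command for Ollama with a memory cap.

    memory_limit and models_dir are illustrative assumptions; pick
    values that match your board and your storage layout.
    """
    return [
        "docker", "run", "-d",
        "--name", "ollama",
        # Cap container memory so a runaway model load is contained
        # instead of starving the whole system.
        "--memory", memory_limit,
        # Keep models on a larger drive instead of the SD card.
        "-v", f"{models_dir}:/root/.ollama",
        "-p", "11434:11434",
        "ollama/ollama",
    ]


if __name__ == "__main__":
    # Print the command for review rather than executing it.
    print(shlex.join(ollama_run_command()))
```

Printing the command instead of running it keeps the sketch safe to try anywhere. Docker can only enforce the cap if the kernel's memory cgroup is enabled, which is worth checking on the Pi before relying on it.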

The issues that really mattered

Three problems kept coming up throughout the build.

Storage capacity limits caused recurring errors on small SD cards. AI models and container images consume more space than expected, and running out of storage leads to unpredictable behavior.
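A simple guard against this is to check free space before pulling a model or an image. The sketch below uses only Python's standard library. The helper names and the 2 GB safety margin are assumptions for illustration, not values taken from this project.

```python
import shutil


def free_gb(path: str = "/") -> float:
    """Free space on the filesystem containing path, in GB (1e9 bytes)."""
    return shutil.disk_usage(path).free / 1e9


def has_room_for(required_gb: float,
                 path: str = "/",
                 safety_margin_gb: float = 2.0) -> bool:
    """True if required_gb plus an assumed safety margin is available.

    The margin leaves space for logs, image layers, and swap files.
    """
    return free_gb(path) >= required_gb + safety_margin_gb
```

For example, `has_room_for(1.0, "/var/lib/docker")` would check whether roughly 1 GB of model data still fits on the filesystem that usually holds Docker's data, assuming Docker uses its default location.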

Memory-related crashes were the most common type of error. The system kept terminating processes without any clear notification until swap was configured correctly.
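Silent terminations like these are typical of Linux running out of memory, so it helps to look at available RAM and swap before loading a model. The sketch below parses `/proc/meminfo`, a standard Linux interface whose values are reported in kB. The function names and the shape of the summary are assumptions made for this example.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo content into a {field: value} mapping."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines without a "name: value" shape
        fields = rest.split()
        if fields and fields[0].isdigit():
            info[key.strip()] = int(fields[0])
    return info


def read_meminfo(path: str = "/proc/meminfo") -> dict[str, int]:
    """Read and parse the live memory statistics (Linux only)."""
    with open(path) as f:
        return parse_meminfo(f.read())


def memory_summary(info: dict[str, int]) -> dict[str, int]:
    """Reduce the parsed fields to the numbers that matter here, in MB."""
    return {
        "available_mb": info.get("MemAvailable", 0) // 1024,
        "swap_total_mb": info.get("SwapTotal", 0) // 1024,
        "swap_free_mb": info.get("SwapFree", 0) // 1024,
    }
```

On the Pi, `memory_summary(read_meminfo())` gives a quick view of the situation. A `swap_total_mb` of zero means no swap is active at all, which matches the conditions under which processes were being killed.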

Performance degradation due to overheating became apparent during longer inference runs. Passive cooling was insufficient: without proper cooling, performance dropped sharply and system stability suffered.
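Watching the CPU temperature during a long run makes this effect visible. The sketch below reads the standard Linux thermal interface, which reports millidegrees Celsius. It assumes the CPU sensor is `thermal_zone0`, and the 75 °C warning level is an assumed threshold for illustration, not a documented limit.

```python
def parse_millidegrees(raw: str) -> float:
    """Convert a sysfs reading such as "48312\\n" to degrees Celsius."""
    return int(raw.strip()) / 1000.0


def read_cpu_temp_c(
        path: str = "/sys/class/thermal/thermal_zone0/temp") -> float:
    """Read the current CPU temperature (assumes thermal_zone0 is the CPU)."""
    with open(path) as f:
        return parse_millidegrees(f.read())


def is_running_hot(temp_c: float, warn_at_c: float = 75.0) -> bool:
    """True once the temperature reaches the assumed warning level."""
    return temp_c >= warn_at_c
```

Calling `read_cpu_temp_c()` every few seconds during an inference run, and logging when `is_running_hot` becomes true, is enough to show whether slowdowns line up with heat.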

Solving these problems doesn't require complex tools – but it does require understanding how the Raspberry Pi performs under continuous load.

Final result

After addressing these limitations, the Raspberry Pi was able to run a local language model reliably. Response times were slow, and the setup was never intended to replace cloud-based AI. However, it was stable, educational, and surprisingly capable for experimentation.

More importantly, it provides a clear view of how AI tasks interact with real-world hardware limitations.

This article focuses on the reasons and lessons learned rather than listing every command and configuration. The complete step-by-step setup process, including the exact Docker commands, swap configuration, storage recommendations, and thermal troubleshooting solutions, is documented separately.