Whether you're building an application using a simple LLM API, a RAG system, or complex AI agents, there's always one crucial question: how can the system scale effectively? Specifically, how will costs and latency change as the number of requests increases?

This issue becomes even more critical with complex AI agents, where a single user query can lead to multiple different LLM calls. In practice, many LLM requests often contain the same input tokens. Users frequently ask similar questions, system prompts repeat in each request, and even when generating a response, the model has to process many of the same tokens again.

Therefore, caching has become a crucial solution for optimizing costs and latency. According to OpenAI documentation, Prompt Caching can reduce latency by up to 80% and reduce token input costs by up to 90%.

What is caching?

Caching is not a new concept in computer science. Essentially, a cache is a component that temporarily stores data to serve recurring requests more quickly.

There are two main states of caching:

- Cache hit : data is found in the cache, allowing for fast retrieval at a low cost.

- Cache miss : data is not in the cache; the system has to retrieve it from the source, which takes more time and resources.

A familiar example is browser caching. When accessing a website for the first time, the browser must load data from the server (cache miss). The data is then stored in the cache. Upon revisiting the site, the browser can load from the cache (cache hit), allowing the webpage to load faster and saving resources.

Caching is particularly effective in systems with repeatedly accessed data. In fact, many systems follow the Pareto principle, meaning that approximately 80% of requests typically focus on 20% of the data. This allows caching to be effective without requiring excessive storage space.

How does Prompt Caching work?

To understand Prompt Caching, it's necessary to understand how LLM generates responses. The LLM inference process typically consists of two stages:

Pre-fill

At this stage, the model processes the entire prompt to generate the first token. This is a computationally intensive phase.

decoding

Subsequently, the token creation model follows an autoregressive pattern, meaning each new token depends on the previous ones.

For example, using prompt:

"What should I cook for dinner?"The model can generate feedback in stages, such as:

Here Here are Here are 5 Here are 5 easy Here are 5 easy dinner ideasThe problem is that every time a new token is created, the model has to reprocess all the previous tokens, which wastes resources.

To solve this problem, LLM uses KV caching . Intermediate tensors are saved to avoid recalculating from scratch. However, KV caching only works within a single prompt.

Prompt Caching extends this mechanism to multiple different prompts.

Prompt Caching in practice

Prompt Caching saves the repeated parts of the prompt, typically:

- System prompt

- fixed instructions

- context from RAG

When a new request has the same prefix, the model will reuse previous calculations instead of processing it from scratch. This significantly reduces costs and latency.

The key point is that caching works at the token level . This means that if two prompts have the same prefix, that part can be cached.

For example:

Prompt 1 What should I cook for dinner?Prompt 2 What should I cook for lunch?In this case, the "What should I cook" section will be cached.

However:

Prompt 1 Dinner time! What should I cook?Prompt 2 Lunch time! What should I cook?These two prompts will not be cached because they have different prefixes.

Therefore, an important principle is:

- Fixed information is placed at the top of the prompt.

- Change information is placed at the bottom of the prompt.

Prompt Caching in Modern APIs

Currently, many models like GPT or Claude support Prompt Caching directly in the API. The cache is typically shared among users within the same organization using a common API key.

This is especially useful in enterprise environments, where many users use the same application and the same prefix.

Some common caching options include:

- In-memory cache: maintains cache for 5–60 minutes

- Extended cache: retains cache for up to 24 hours (depending on the model)

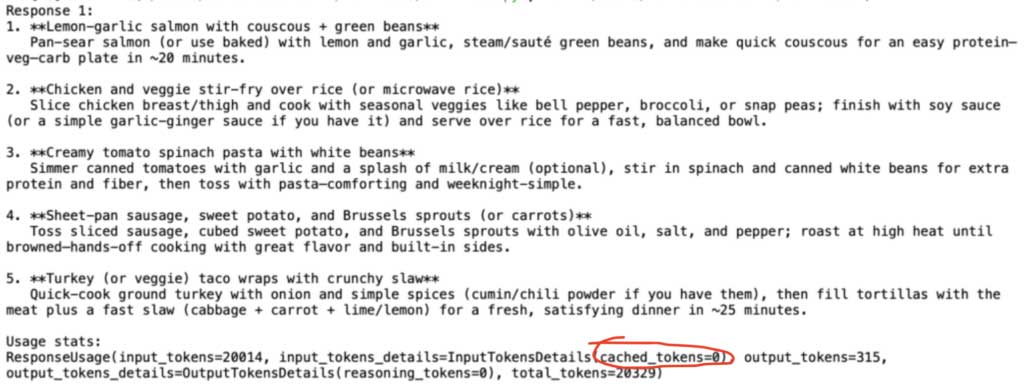

We can see all of this in practice with the following minimal Python example, making requests to the OpenAI API, using Prompt Caching and the previously mentioned cooking prompts. I've added a rather large generic prefix to my prompts to make the caching effect even more apparent:

from openai import OpenAI api_key = "your_api_key" client = OpenAI(api_key=api_key) prefix = """ You are a helpful cooking assistant. Your task is to suggest simple, practical dinner ideas for busy people. Follow these guidelines carefully when generating suggestions: General cooking rules: - Meals should take less than 30 minutes to prepare. - Ingredients should be easy to find in a regular supermarket. - Recipes should avoid overly complex techniques. - Prefer balanced meals including vegetables, protein, and carbohydrates. Formatting rules: - Always return a numbered list. - Provide 5 suggestions. - Each suggestion should include a short explanation. Ingredient guidelines: - Prefer seasonal vegetables. - Avoid exotic ingredients. - Assume the user has basic pantry staples such as olive oil, salt, pepper, garlic, onions, and pasta. Cooking philosophy: - Favor simple home cooking. - Avoid restaurant-level complexity. - Focus on meals that people realistically cook on weeknights. Example meal styles: - pasta dishes - rice bowls - stir fry - roasted vegetables with protein - simple soups - wraps and sandwiches - sheet pan meals Diet considerations: - Default to healthy meals. - Avoid deep frying. - Prefer balanced macronutrients. Additional instructions: - Keep explanations concise. - Avoid repeating the same ingredients in every suggestion. - Provide variety across the meal suggestions. """ * 80 # huge prefix to make sure i get the 1000 something token threshold for activating prompt caching prompt1 = prefix + "What should I cook for dinner?"

And then comes prompt 2:

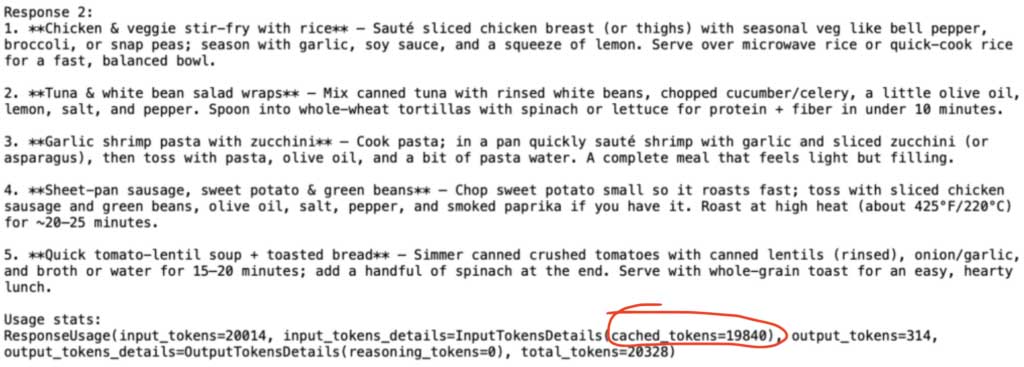

prompt2 = prefix + "What should I cook for lunch?" response2 = client.responses.create( model="gpt-5.2", input=prompt2 ) print("nResponse 2:") print(response2.output_text) print("nUsage stats:") print(response2.usage)

Therefore, for prompt #2, we will only be charged the full price for the remainder, which is not exactly the same as that prompt. This will be the input tokens minus the cached tokens: 20,014 – 19,840 = only 174 tokens, or in other words, less than 99% of the tokens we were charged the full price for. For the remaining cached tokens, we receive an extremely high discount (up to 90% off).

In any case, since OpenAI imposes a minimum threshold of 1,024 tokens to enable the prompt caching feature and the cache can be retained for up to 24 hours, it's clear that those cost benefits are only achievable in practice when running AI applications at large scale, with many active users making numerous requests daily. However, as explained for such cases, the Prompt Caching feature can deliver significant cost and time benefits to LLM-powered applications.

- Total tokens: 20,014

- Token cache: 19,840

- Tokens charged in full: 174

This is equivalent to a 99% reduction in the full fee token .

However, OpenAI requires a minimum of 1,024 tokens to enable Prompt Caching and a maximum cache of 24 hours. Therefore, the greatest benefits appear when the system is large-scale and has many requests.

Summary

Prompt caching is a powerful optimization technique that reduces the cost and latency of LLM systems. By reusing repetitive prompts, the system can avoid redundant computations and improve performance.

As AI applications expand, especially in RAG or multi-agent systems, prompt caching will become a crucial factor in ensuring long-term scalability and cost optimization.