Deep Learning (DL)


The Deep Learning revolution began around 2010.

Since then, Deep Learning has solved many "impossible" problems.

The Deep Learning revolution didn't begin with a single discovery. It happened when several essential elements were in place:

- Computers were fast enough.

- Computer memory and storage were large enough.

- Better training methods were invented.

- Better fine-tuning methods were invented.

Neuron

Scientists estimate that the human brain has between 80 and 100 billion neurons.

These neurons have hundreds of billions of connections with each other.

Neurons (also known as nerve cells) are the basic units of our brain and nervous system.

Neurons are responsible for receiving input from the outside world, sending output (commands to our muscles), and transforming the electrical signals in between.

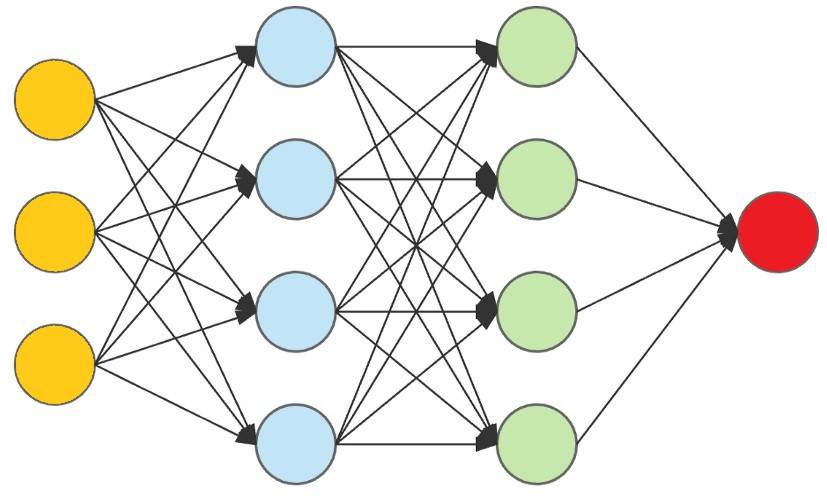

Artificial neural networks

Artificial neural networks are often referred to as Neural Networks (NNs).

Artificial neural networks are essentially multilayer perceptrons.

The perceptron defines the first step in multilayer artificial neural networks.
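As a minimal sketch of a single perceptron: it multiplies each input by a weight, adds a bias, and outputs 1 when the sum is positive. The weights and bias below are hand-picked for illustration (they make the unit behave like a logical AND); they are assumptions, not values from this text.

```python
def perceptron(inputs, weights, bias):
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


# Hypothetical, hand-picked parameters that make the unit act like logical AND.
AND_WEIGHTS = [1.0, 1.0]
AND_BIAS = -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, perceptron([a, b], AND_WEIGHTS, AND_BIAS))
```

A multilayer network stacks many such units, usually with a smoother activation than this hard threshold.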

Artificial neural networks are at the core of Deep Learning.

Artificial neural networks are often described as one of the most significant inventions in computing.

Artificial neural networks can tackle problems that are very hard to solve with hand-written algorithms:

- Medical diagnosis

- Face detection

- Speech recognition

Artificial neural network model

The input data (yellow) is processed through a hidden layer (blue) and modified through another hidden layer (green) to produce the final output (red).
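The flow described above can be sketched as a forward pass in plain Python. This is only an illustration: the layer sizes, the weights and biases, and the choice of ReLU as the activation are all assumptions made for the example, not details given in this text.

```python
def relu(values):
    """Activation assumed for this sketch: negative values become 0."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer; each row of weights feeds one neuron."""
    return [
        sum(x * w for x, w in zip(inputs, row)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the inputs through every layer; only hidden layers are activated."""
    activations = inputs
    for index, (weights, biases) in enumerate(layers):
        activations = dense(activations, weights, biases)
        if index < len(layers) - 1:
            activations = relu(activations)
    return activations


# Hypothetical parameters, chosen so the arithmetic is easy to follow.
HIDDEN_1 = ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0])  # first hidden layer (blue)
HIDDEN_2 = ([[1.0, 1.0], [-1.0, 1.0]], [0.0, 0.5])  # second hidden layer (green)
OUTPUT = ([[2.0, 1.0]], [-1.0])                     # output layer (red)

inputs = [2.0, 1.0]                                 # input data (yellow)
print(forward(inputs, [HIDDEN_1, HIDDEN_2, OUTPUT]))
```

In a trained network these parameters would be learned from data rather than written by hand.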

Tom Mitchell

Tom Michael Mitchell (born 1951) is an American computer scientist and University Professor at Carnegie Mellon University (CMU). He is a former Chair of the Machine Learning Department at CMU.

"A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E."

Tom Mitchell (1999)

- E: Experience (the number of times the task has been attempted).

- T: The task (for example, driving a car).

- P: The performance (how well or badly the task is done).
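To make E, T, and P concrete, here is a small sketch under assumed details. The task T is predicting y from x when the hidden rule is y = 3x, the experience E is the number of training passes, and the performance P is the mean squared error. The data, the learning rate, and the use of gradient descent are illustrative choices, not part of Mitchell's definition.

```python
def mean_squared_error(weight, examples):
    """Performance measure P: lower is better."""
    return sum((weight * x - y) ** 2 for x, y in examples) / len(examples)


def train(examples, passes, learning_rate=0.05):
    """Return the learned weight and the error before training and after each pass."""
    weight = 0.0
    history = [mean_squared_error(weight, examples)]
    for _ in range(passes):  # each pass adds experience E
        gradient = sum(2 * (weight * x - y) * x for x, y in examples) / len(examples)
        weight -= learning_rate * gradient
        history.append(mean_squared_error(weight, examples))
    return weight, history


# Hypothetical task T: the examples follow the rule y = 3x.
EXAMPLES = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]

weight, history = train(EXAMPLES, passes=10)
for experience, error in enumerate(history):
    print(experience, round(error, 4))
```

In this setup the error shrinks with every pass, which is the sense in which the program "learns" under the definition above.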

The story of Giraffe

In 2015, Matthew Lai, a student at Imperial College London, created an artificial neural network called Giraffe.

Giraffe could be trained in 72 hours to play chess at the same level as an international master.

Computers that play chess aren't new, but the way these programs are created is novel.

Intelligent chess-playing programs take years to build, while Giraffe was built in 72 hours using an artificial neural network.

Deep Learning

Classical programming uses programs (algorithms) to produce results:

Traditional computing:

Data + Computer algorithm = Result

Machine learning uses the results to create programs (algorithms):

Machine learning:

Data + Results = Computer Algorithm
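The two formulas can be contrasted in a short sketch. In the first function a human writes the rule; in the second, the rule is recovered from data and results. The rule y = 2x + 1 and the use of ordinary least squares for the fit are assumptions made for this example.

```python
def classical_program(x):
    """Classical programming: a human writes the rule."""
    return 2 * x + 1


def learn_program(data, results):
    """Machine learning: fit a line to data and results, return it as a function."""
    n = len(data)
    mean_x = sum(data) / n
    mean_y = sum(results) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(data, results))
    variance = sum((x - mean_x) ** 2 for x in data)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


data = [0, 1, 2, 3, 4]

# Data + Computer Algorithm = Result
results = [classical_program(x) for x in data]

# Data + Results = Computer Algorithm
learned_program = learn_program(data, results)

print(results)
print(learned_program(10))
```

The learned function never sees the hand-written rule, only its inputs and outputs, yet it reproduces the same behavior on new input.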

Machine Learning

Machine learning is often considered the equivalent of artificial intelligence.

This is incorrect. Machine Learning is a subfield of Artificial Intelligence.

Machine learning is a field of artificial intelligence that uses data to teach machines.

"Machine learning is a field of study that gives computers the ability to learn without being explicitly programmed."

Arthur Samuel (1959)

The formula for smart decision-making

- Save the results of all actions.

- Simulate all possible outcomes.

- Compare the new actions with the old actions.

- Test whether the new action is good or bad.

- Choose the new action if it's less bad.

- Repeat the entire process.

Because computers can repeat this process millions of times, they have been shown to make very intelligent decisions.
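The steps listed above can be sketched as a simple loop. Everything concrete here is hypothetical: the "actions" are moves of one step left or right on a number line, and "badness" is the distance from an arbitrary target of 7.

```python
def badness(position):
    """Hypothetical score: distance from the target 7 (0 is best)."""
    return abs(position - 7)


def decide(start, steps):
    """Repeatedly try new actions and keep one only if it is less bad."""
    current = start
    history = {current: badness(current)}        # save the results of all actions
    for _ in range(steps):                       # repeat the entire process
        candidates = [current - 1, current + 1]  # simulate all possible outcomes
        for candidate in candidates:
            history[candidate] = badness(candidate)
        best_new = min(candidates, key=history.get)
        # Compare the new action with the old one and test which is worse.
        if history[best_new] < history[current]:
            current = best_new                   # choose the new action if less bad
    return current, history


final_position, history = decide(start=0, steps=10)
print(final_position)
```

Real systems search far larger spaces of actions, but the save, simulate, compare, and repeat structure is the same.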
