How to stop your personal data from being used to train AI

Most popular AI tools offer options that allow you to decide whether or not you want your conversations and content used to train the model.

Frankly, it's unpleasant to know that anything you've ever written may have been used to train large language models (LLMs), and there's nothing we can do to change that. Copyright law is skewed toward AI companies because, until now, the responsibility has been on the user to opt out of having their personal data, conversations, and creative work used to train AI models. Opting out won't remove your work from datasets that have already been used for training, but it can prevent your work from being used in the future.

Fortunately, most popular AI tools offer options that allow you to decide whether or not you want your conversations and content used to train the model.

The three most popular tools are: ChatGPT, Gemini, and Claude.

The three most popular tools currently available are ChatGPT, Gemini, and Claude. Fortunately, the opt-out option in all three isn't buried under layers of settings and submenus.

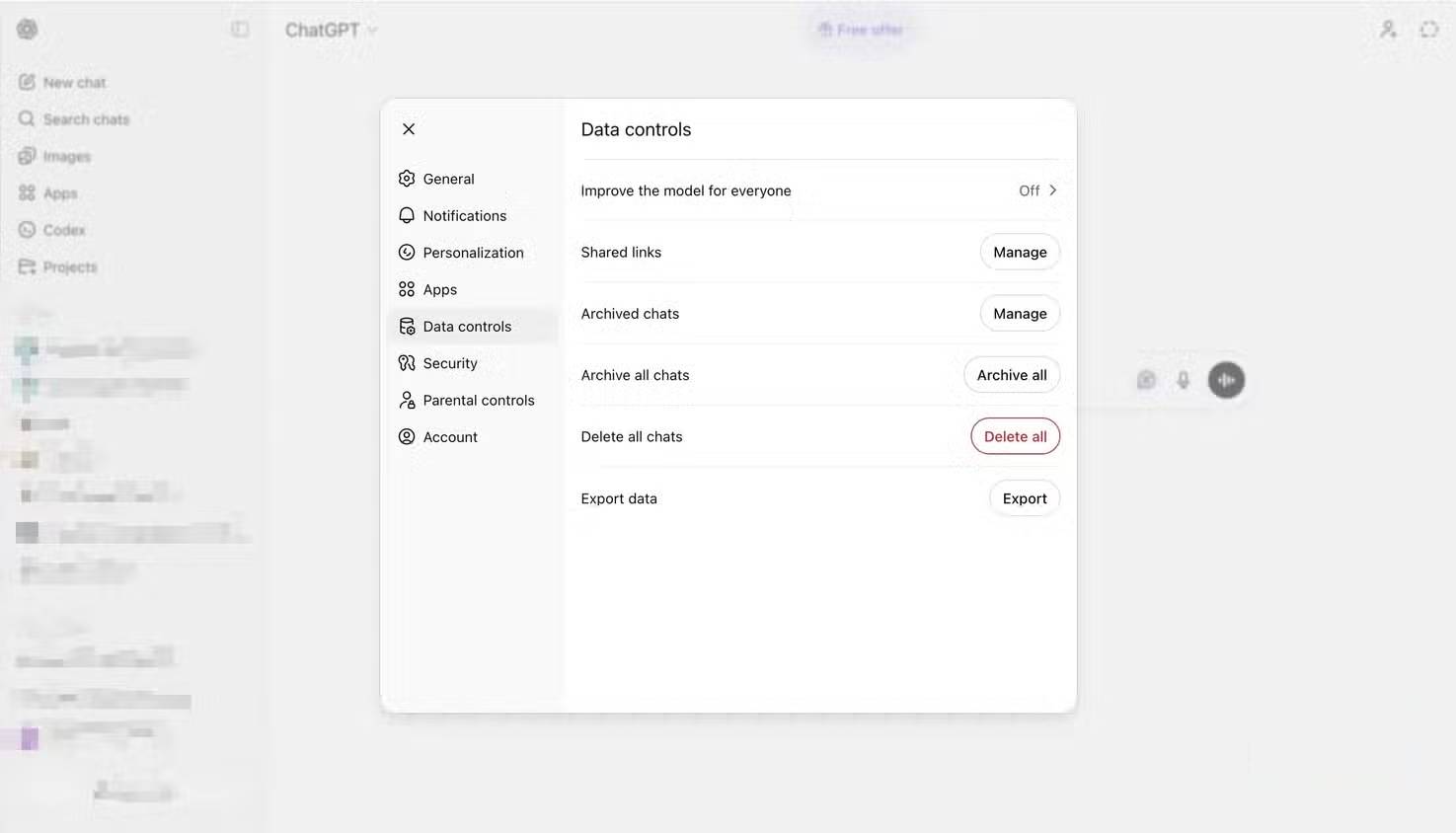

On ChatGPT, click your profile picture, then select Settings -> Data Controls. Turn off the "Improve the model for everyone" option to prevent future conversations from being used for training. You can also use Temporary Chats, which neither store your data nor use the conversation for training. However, there are a few caveats. For example, if you give feedback on a conversation using the like/dislike icons, the entire conversation may be used for training, even if you've turned this setting off in Data Controls. Additionally, ChatGPT temporarily stores every conversation to monitor for abuse and ensure safety.

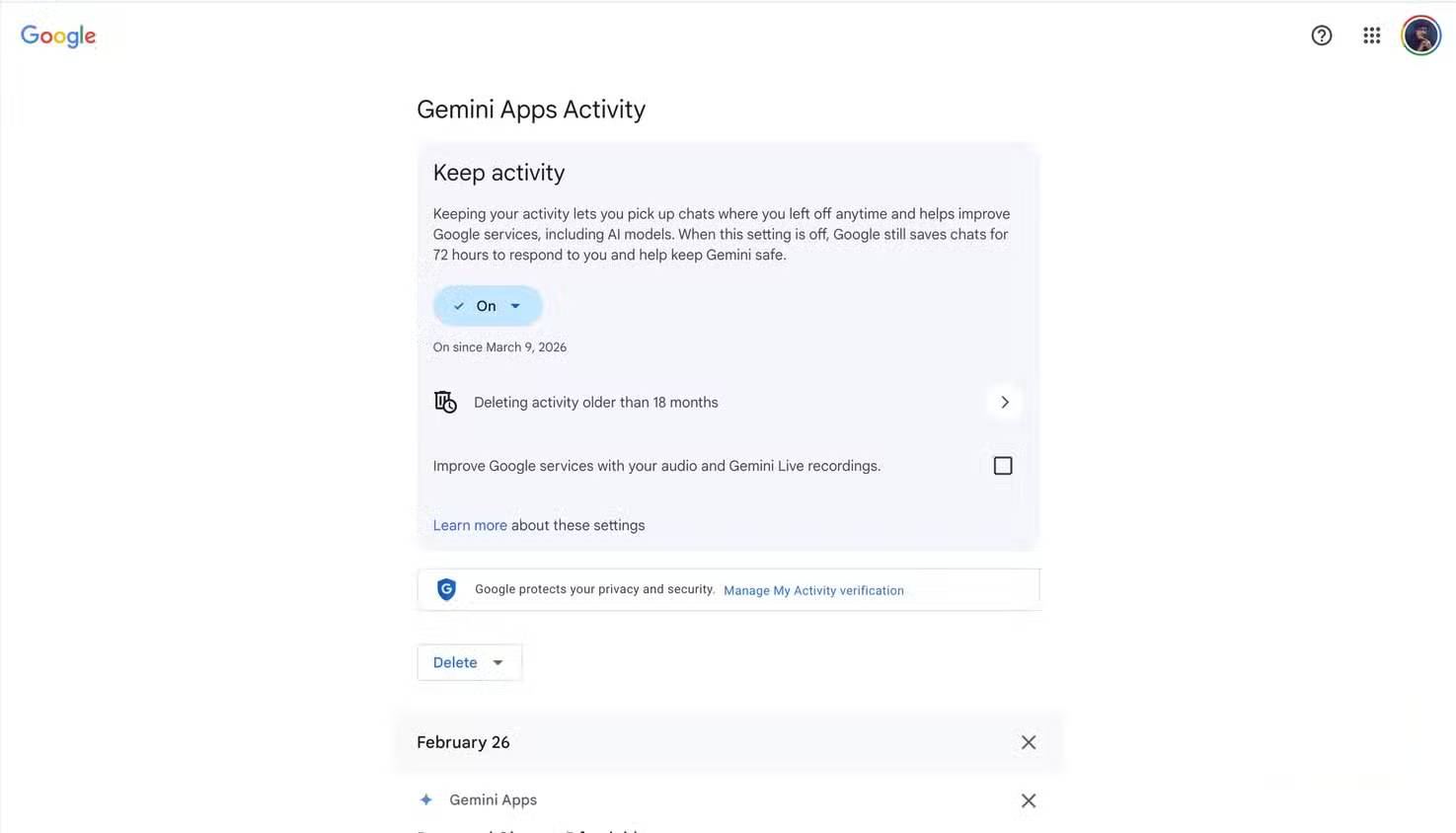

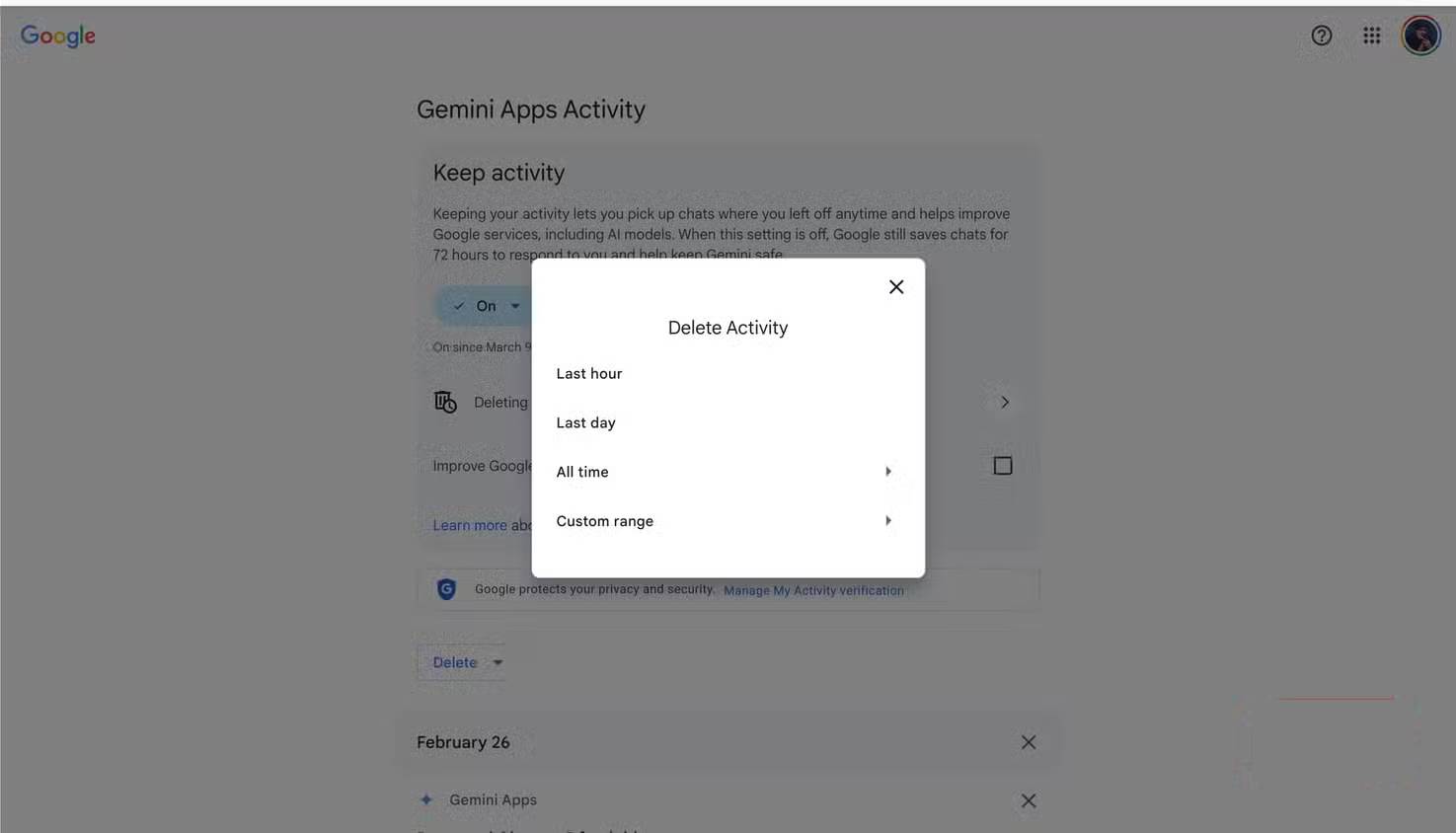

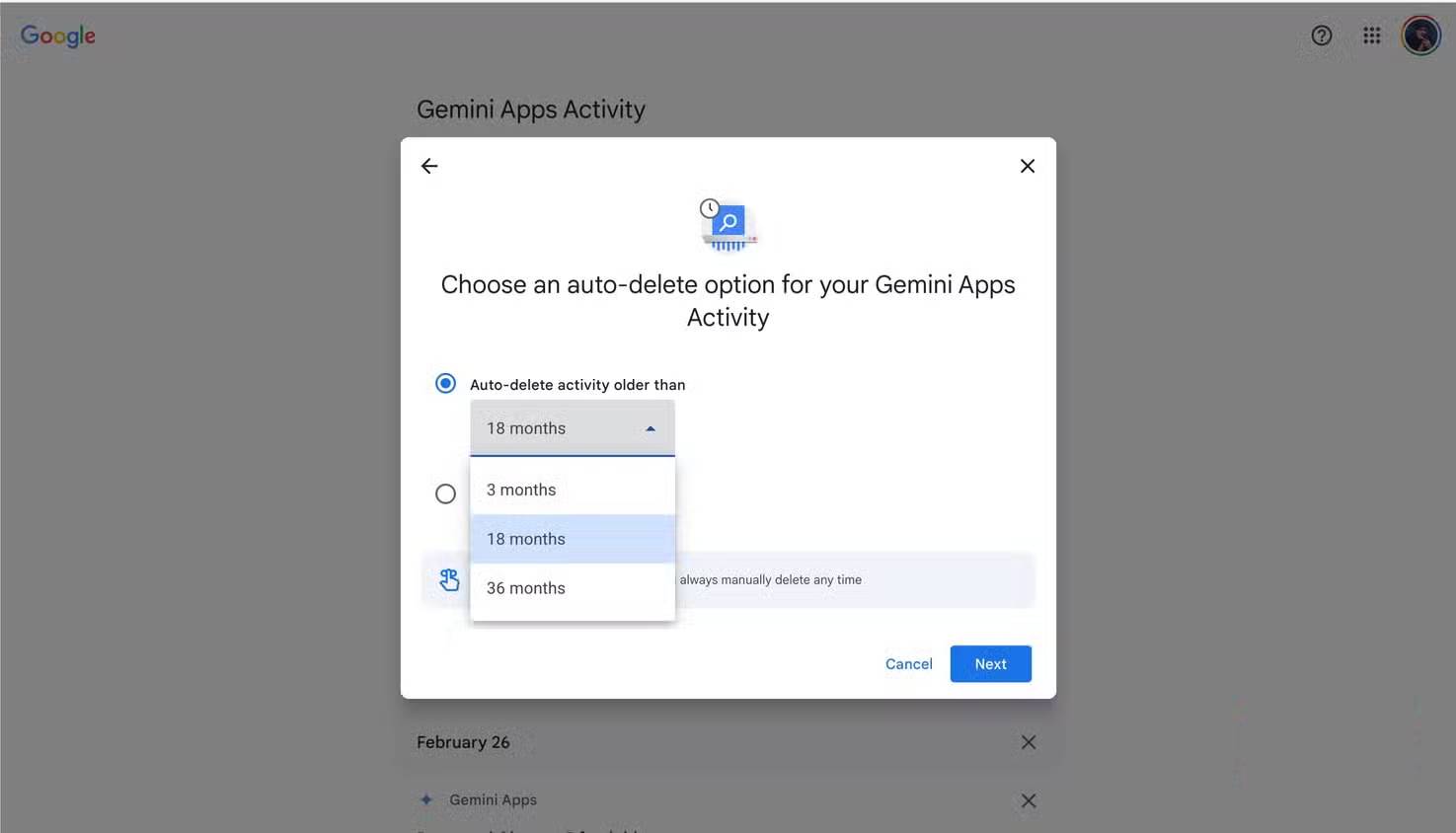

If you're using Gemini, opting out is simple: go to Settings and help -> Activity, then click Turn Off in the Keep Activity drop-down menu. Once this setting is turned off, Gemini will still save new conversations for up to 72 hours, but they won't be used for training. You can also delete old activity using the Delete Activity option on the same page. However, this won't affect previous conversations that have been marked for manual review. Data from those conversations can be retained for up to 3 years, according to the Gemini app's Privacy Hub.

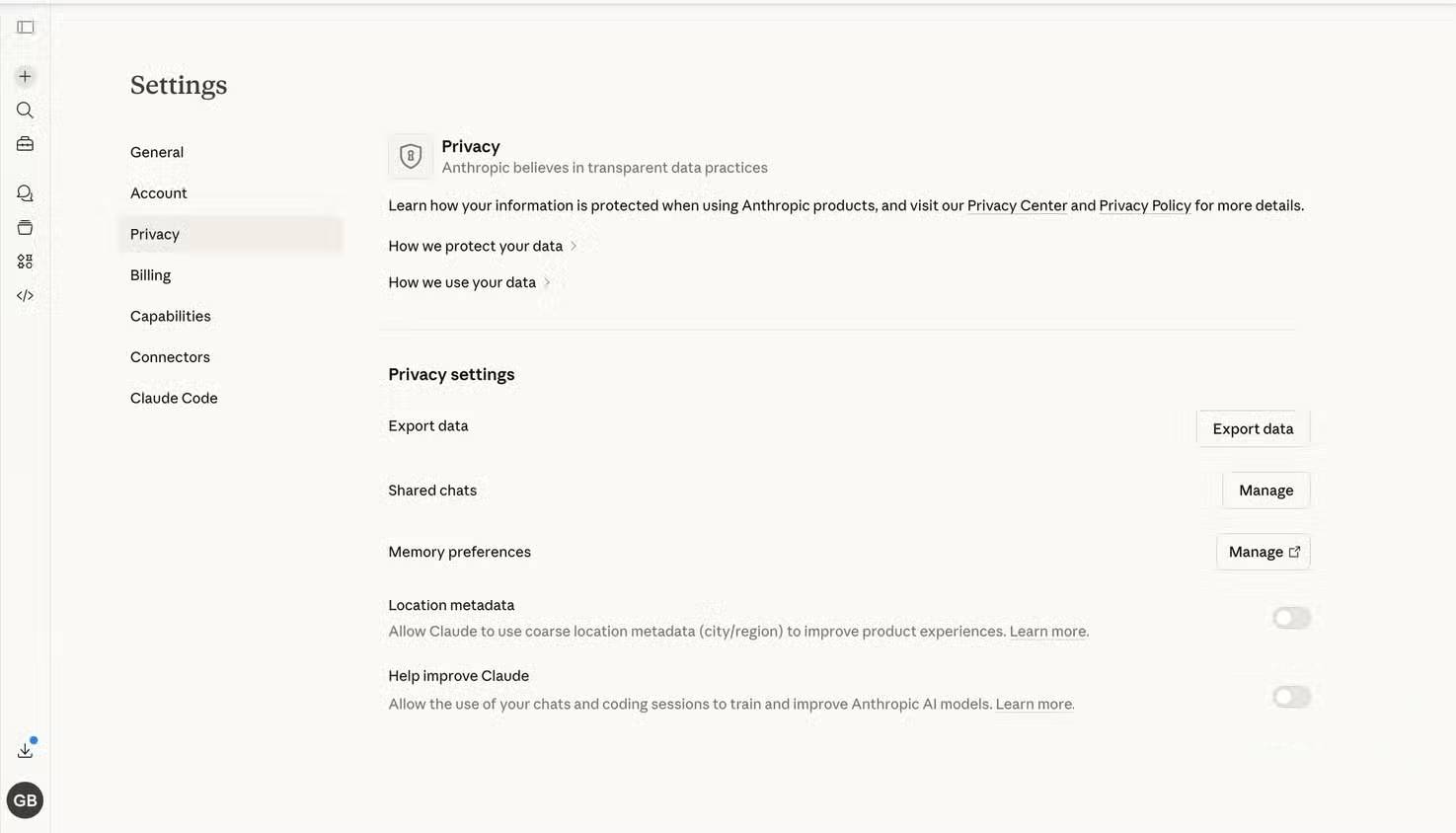

Claude uses your conversations and coding sessions to improve its model. To disable this, click your profile picture, then go to Settings -> Privacy and turn off the "Help improve Claude for everyone" option. Unlike ChatGPT and Gemini, once you opt out of improving Claude, your past information will not be used to improve the model. Also, if you don't want a specific conversation used for model training, simply delete it. Remember that if you provide feedback on an answer, the entire conversation will be saved and used to improve the model, even if you've turned off the "Help improve Claude for everyone" option.

Creative tools also use your work.

Make sure your original designs are not used to train AI.

Design software providers Adobe and Figma use your data and content to improve their AI models.

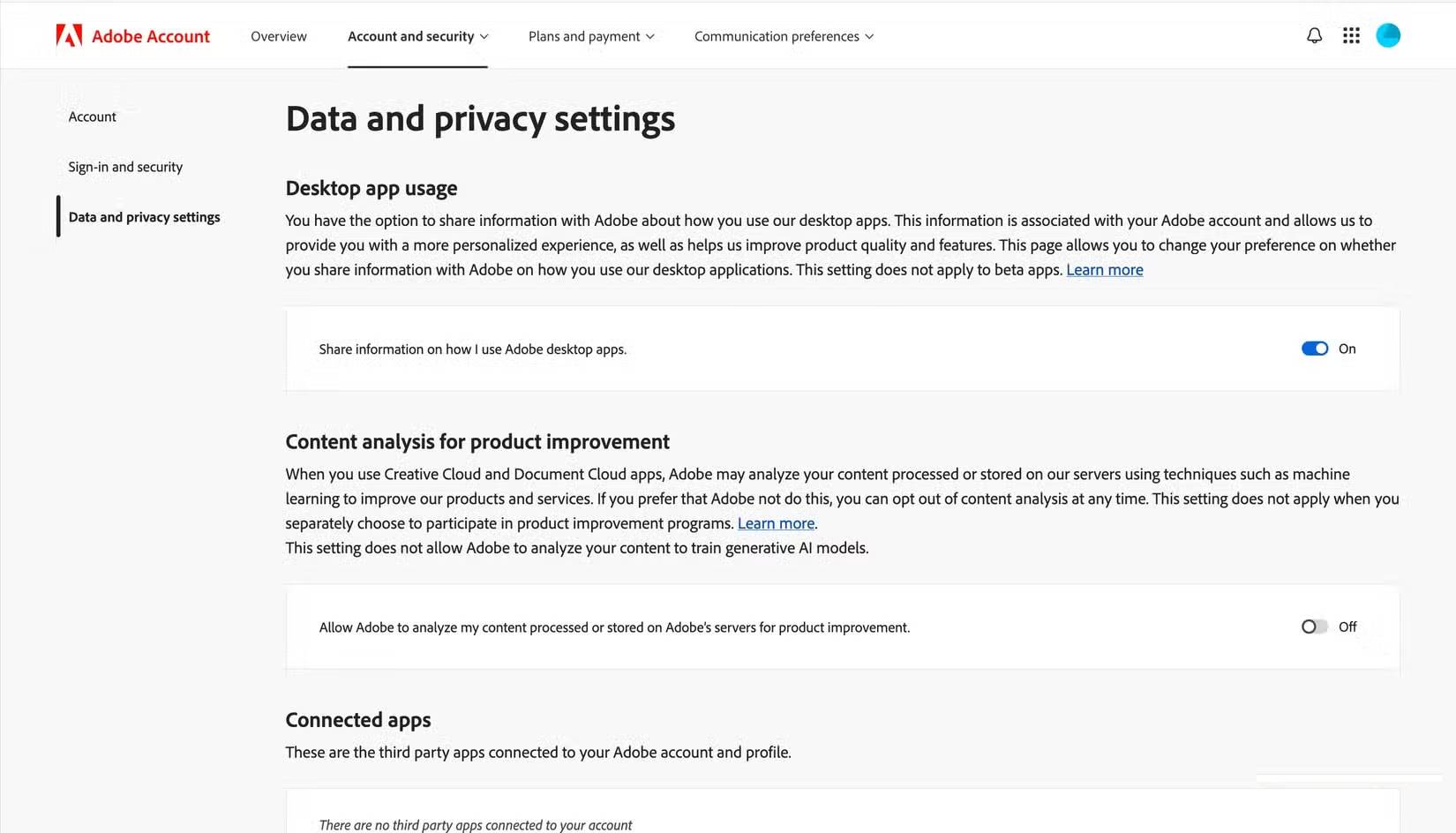

In Adobe's case, any content uploaded to the Adobe Stock marketplace is used to train AI. Files stored on Adobe Creative Cloud may be analyzed to improve Adobe software, but they will not be used to train models. Files stored locally are not analyzed at all. So, if you don't want Adobe to use your work to train AI, don't upload it to the Adobe Stock marketplace. If you want to opt out of content analysis, you can do so by visiting Adobe's privacy policy page and turning off "Content analysis for product improvement".

Figma uses your content and usage data to improve its AI features. Content data includes the text, images, comments, annotations, layer names, layer attributes, and similar material that you have created or uploaded to Figma. Usage data, on the other hand, relates to how you use and access Figma. To opt out, go to the AI tab on the Figma Settings page and turn off Content improvement.

Social media platforms are training AI using your posts.

LinkedIn allows you to turn this feature off, but Meta needs to do better.

AI companies aren't the only ones using your data to train their models. LinkedIn and Meta are also using your posts and other information to improve their AI models.

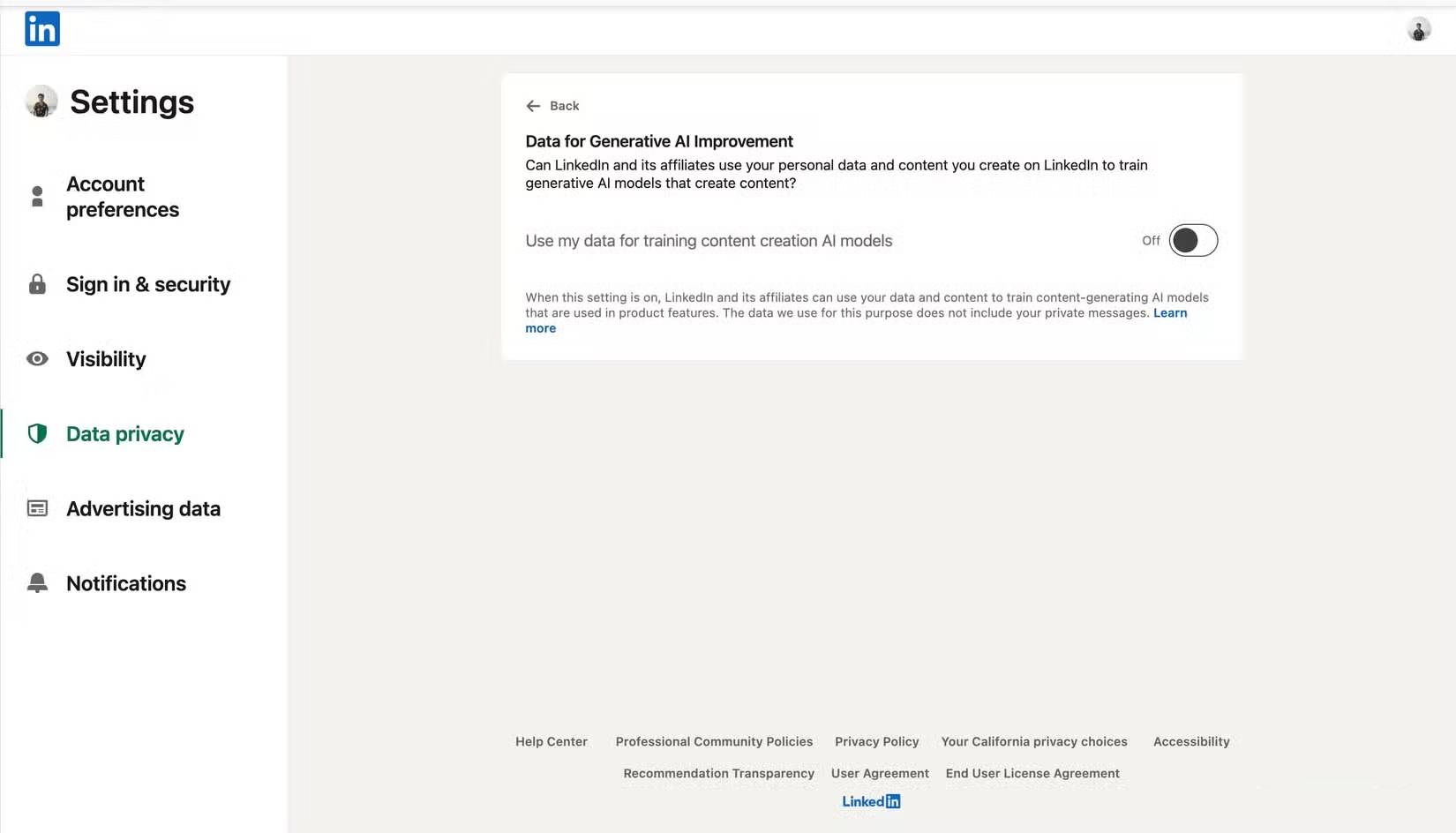

LinkedIn data, such as posts and images, is used to train its generative AI models for content creation, but not its AI models for personalization, safety, and anti-abuse. You can opt out by turning off the Data for Generative AI improvement setting in the Data Privacy tab under Settings. This only applies to future posts and content.

Meta is the worst offender when it comes to giving you control over whether your data is used to train AI. All your public posts and interactions on Facebook, Instagram, and Threads are used to train Meta AI, and there's virtually nothing you can do to stop it. You can submit an objection form (which requires a disturbing amount of personal information) to request that your data not be used to train Meta AI, but whether it is accepted depends on your country's data protection laws.

Most AI platforms automatically exclude business users' data from model training. The same should apply to regular users.
