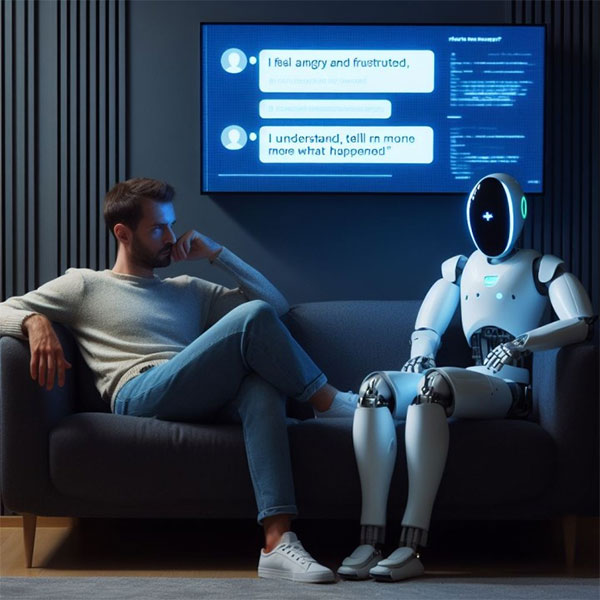

Among the countless chatbots and AI avatars available today, you can find all sorts of 'characters' to chat with: fortune tellers, stylists, favorite fictional characters… and even bots that claim to be therapists, psychologists, or simply 'listeners'.

AI chatbots are increasingly claiming to support mental health. But experts warn that choosing this approach carries significant risks.

Large language models are trained on massive amounts of data but generate their responses probabilistically, which makes them unpredictable at times. In just a few years, these tools have become commonplace. At the same time, controversial cases have emerged in which chatbots encouraged self-harm or suicide, or even suggested that people recovering from addiction return to substance use.

The problem is that many chatbots are designed to 'empathize' and keep users engaged in conversation, rather than to improve their mental health. And for the average user, it's difficult to distinguish a tool that truly adheres to therapeutic standards from one that is simply good at holding a conversation.

A research team from the University of Minnesota Twin Cities, Stanford University, the University of Texas, and Carnegie Mellon University tested chatbots as 'therapists.' The results revealed a number of shortcomings in how they provide 'care.' Stevie Chancellor, an assistant professor at Minnesota and co-author of the study, stated that these chatbots are not a safe replacement for professional therapists and do not meet high-quality therapy standards.

Why are chatbots 'impersonating' therapists a cause for concern?

Psychologists and consumer protection organizations have warned regulators that chatbots claiming to offer therapy may be causing harm. Several US states have begun taking action. Last August, Illinois Governor JB Pritzker signed a law banning the use of AI in mental health care and therapy, except for administrative tasks.

In June, the Consumer Federation of America, along with several other organizations, requested that the U.S. Federal Trade Commission (FTC) investigate AI companies allegedly practicing medicine illegally through character-based AI platforms, specifically naming Meta and Character.AI.

Although platforms often include disclaimers stating that these characters are not real experts, chatbots can still answer confidently even when they are wrong. In some cases, bots even claim to have professional licenses or training, which is completely untrue.

According to the American Psychological Association (APA), the absolute certainty with which chatbots deliver 'delusional' answers is concerning.

The risks of using AI instead of real therapy

A qualified therapist must adhere to confidentiality rules and is subject to oversight by a licensing authority. If they cause harm, their license may be suspended or revoked. Chatbots are not subject to any such constraints.

Furthermore, AI is designed to maintain interaction. It will try to keep you in the conversation rather than work toward a specific therapeutic goal. This may leave you feeling heard, but that feeling doesn't translate into real progress.

Another major risk is the tendency toward 'over-agreement'. Research from Stanford shows that chatbots are prone to sycophancy, meaning they agree with users even when they shouldn't. Real therapy requires more than support: it also needs challenging dialogue that helps patients re-examine false beliefs, delusions, or extreme thoughts. A chatbot that only nods along can make the situation worse.

The risks are even higher for people with disorders such as schizophrenia or bipolar disorder. Experts warn that AI could reinforce distorted thinking instead of helping to correct it.

More importantly, therapy is not just about talking. It involves building rapport, understanding the context, applying specific methods, and monitoring long-term progress, none of which current AI can replace.

If we still want to use AI, how can we do it more safely?

The shortage of mental health professionals and the "loneliness epidemic" have led many people to turn to AI as a stopgap. However, experts recommend that a trained professional remain the first choice.

If you are in crisis in the US, you can call the 988 Lifeline for free, confidential support, available 24/7.

If you want to try a therapeutic chatbot, choose a tool developed by psychology professionals and designed specifically for that purpose, rather than a general-purpose chatbot. Keep in mind, however, that this technology is still very new and lacks clear oversight mechanisms.

The most important thing is not to confuse an AI's confidence with real competence. A reasonable-sounding answer isn't necessarily sound advice. A chatbot might make you feel understood, but that doesn't guarantee it's guiding you in a healthy direction.